Reproducibility and transparency in academia, and implications for statistical agencies

2025-10-09

Follow along

larsvilhuber.github.io/transparency-statistical-agencies/ (HTML zipped, PDF)

Goals of my talk

What is the state of reproducibility and transparency in academic economics?

What are the benefits of reproducibility and transparency?

Increasingly broad consensus in academia

What are the implications for statistical agencies?

Reproducibility in Economics

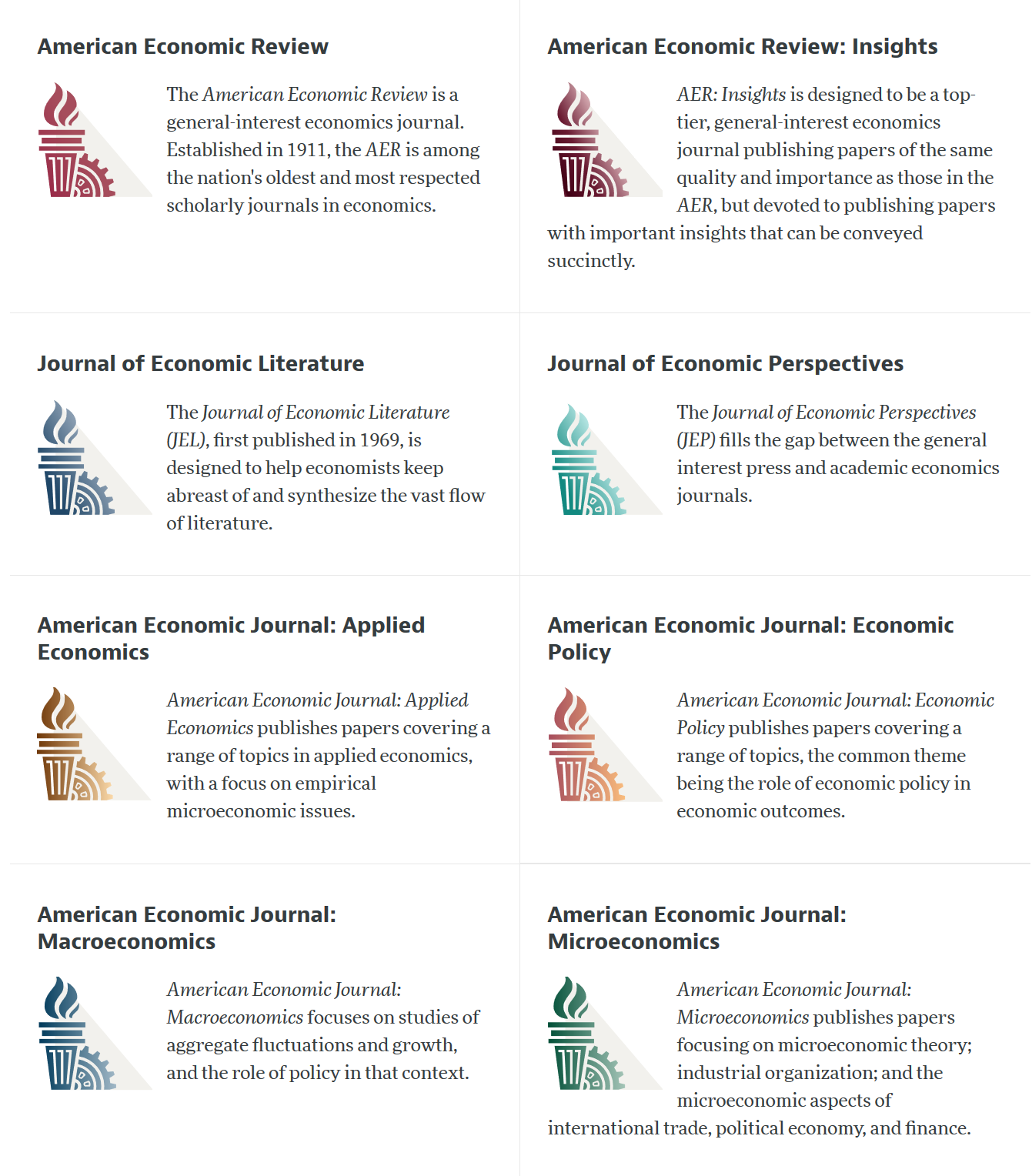

AEA Journals

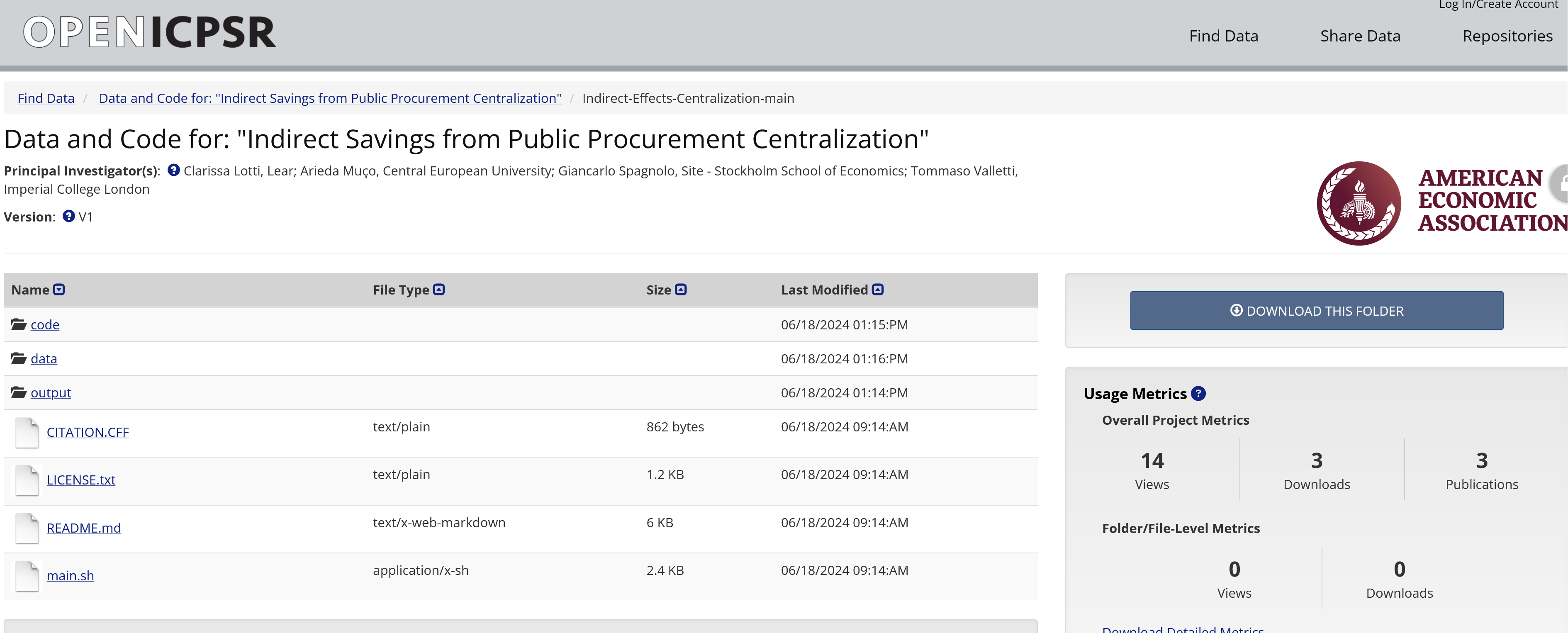

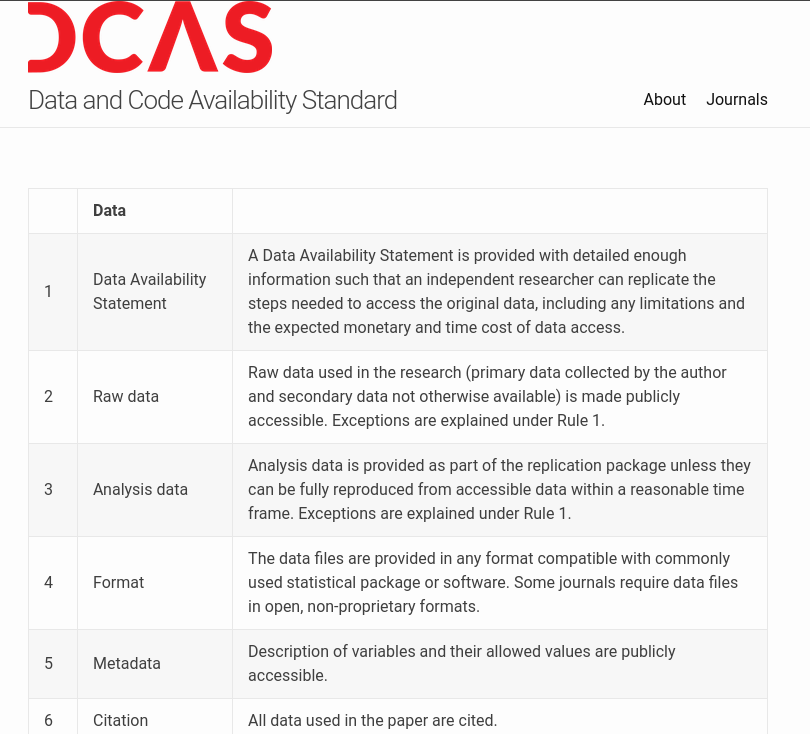

What is a replication package?

AEA policy

Tenets of the Policy

- Transparency

- Completeness

- Preservation

Transparency

- Provenance of the data

- Processing of the data, from raw data to results (code)

It is the policy of the American Economic Association to publish papers only if the data used in the analysis are clearly and precisely documented and access to the data and code is clearly and precisely documented and is non-exclusive to the authors.

Completeness

- All data needs to be identified and access described

- All code needs to be described and provided

Authors … must provide, prior to acceptance, the data, programs, and other details of the computations sufficient to permit replication

Preservation

- All data needs to be preserved for future replicators

- Ideally within the replication package, for convenience, subject to the terms of use (ToU)

- Otherwise, in a trusted repository

Preservation

- Code must be in a trusted repository

- Usually, within the replication package

- Websites and GitHub are not acceptable

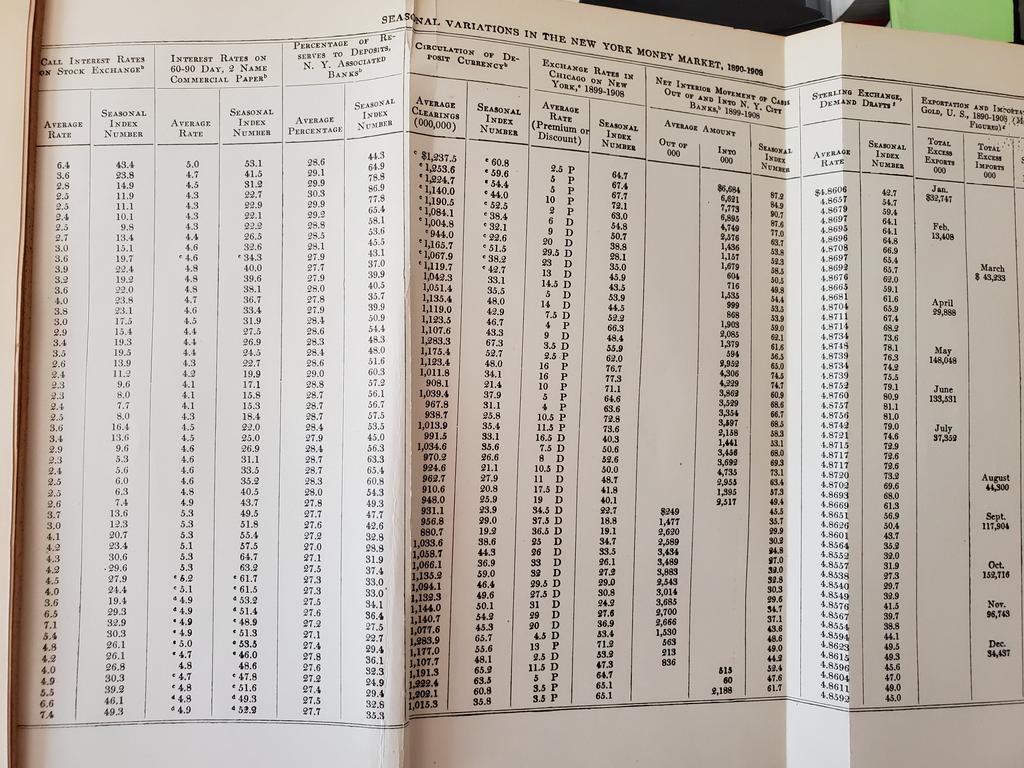

Historically

AER 2011, thanks to Stefano DellaVigna

Modern preservation

Side note: Government

- Data are often confidential

- Are they preserved? (NARA, otherwise)

- Are they accessible to others? (FSRDC, NORC, etc.)

- Code is sometimes deemed “confidential”

- We will return to this topic!

Exceptions to the Policy

None

…

… there is a grey zone:

- When data do not belong to the researcher, there is no control over preservation or access!

- Sometimes, ToU prevent the researcher from revealing metadata (name of company, location)

Transparency again

- However:

- No exception to the need to describe access (own and others')

- No exception to the need to fully describe processing (possibly with redacted code)

Enforcement of the AEA Policy

Reproducibility?

Reproducibility

“‘Reproducibility’ refers to the ability of a researcher to duplicate the results of a prior study using the same materials and procedures as were used by the original investigator.” [1]

Testing for …

- Transparency

- Completeness

through reproducibility

Criteria: Transparency?

- Can a reasonable person understand the description of acquisition of data and processing via code?

Criteria: Completeness?

- Do the provided materials allow someone to reproduce all the tables and figures in the paper?

Who is the target person?

Student replicators

Who is the target person?

Over the past 6 years, over 170 undergraduate students have been involved in verifying these articles.

- Economics, biostatistics, sociology

- Typically recruited in sophomore or junior year, but will consider freshmen through master’s students

Who is the target person?

- You (in 4 years, between prepping 2 new courses, an R&R, a new child, and tenure coming up in 2 years)

- Your RA (in 4 years, because you are… see above)

- Your future readers who will cite you (in 4-10 years, who may want to extend or replicate your study, but won’t if it is too complex)

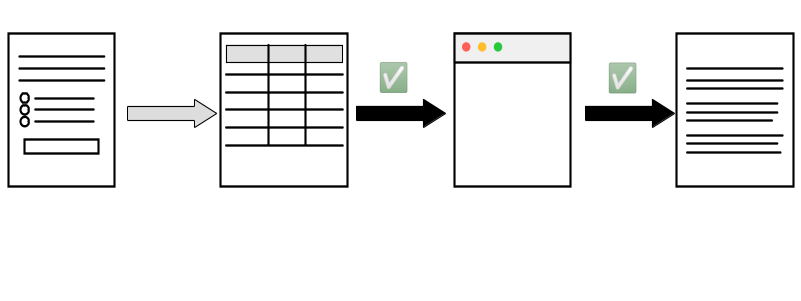

Tracing inputs from outputs

Reproducibility in Economics and beyond

Data Editors

- American Economic Association (8)

- Econometric Society (3)

- Canadian Journal of Economics (1)

- Royal Economic Society (2)

- Western Economic Association International (1)

- European Economic Association (1)

- Review of Economic Studies (1)

- Journal of the European Economic Association (1)

- Journal of Political Economy (3)

Common policies

https://social-science-data-editors.github.io/

Elsewhere: Political Science

Elsewhere: Sociology

Trust in Government Statistics

United Nations

Fundamental Principles of Official Statistics, Principle 3:

Accountability and Transparency: To facilitate a correct interpretation of the data, the statistical agencies are to present information according to scientific standards on the sources, methods and procedures of the statistics. [5]

National Academies

Principles and Practices for a Federal Statistical Agency, Principle 2:

Credibility among Data Users: A federal statistical agency must have credibility with those who use its data and information. [6]

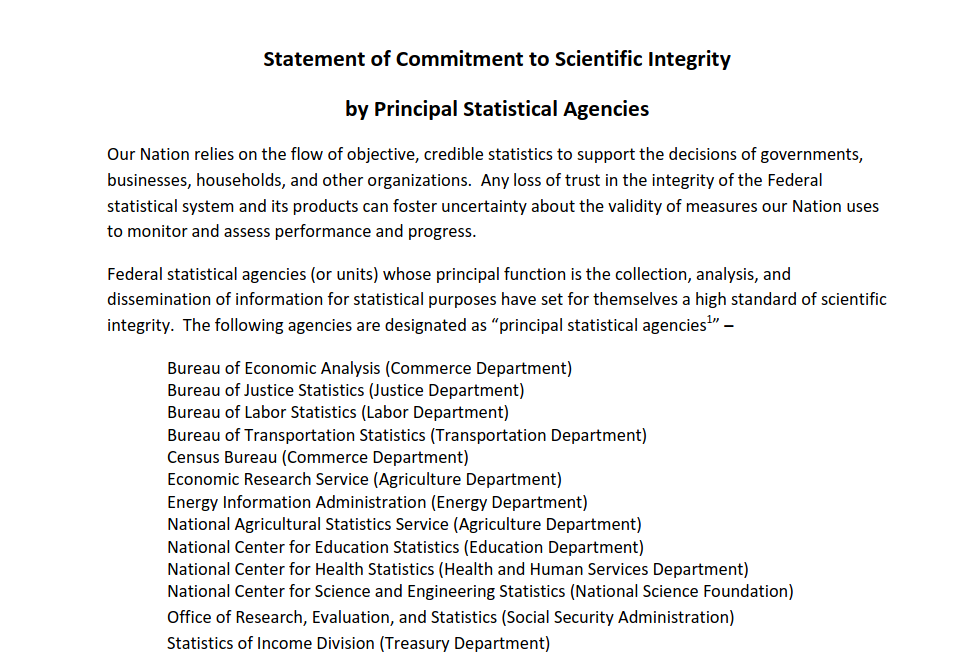

OMB

“… flow of objective, credible statistics to support the decisions of individuals, households, governments, businesses, and other organizations.”

OMB

Statistical Policy Directive No. 1, 4:

“Any loss of trust in the integrity of the Federal statistical system and its products could lessen respondent cooperation with Federal statistical surveys, decrease the quality of statistical system products, and foster uncertainty about the validity of measures our Nation uses to monitor and assess its performance and progress.”

Agency efforts


Joint Statement

Joint Statement on Commitment to Scientific Integrity and Transparency

- Principle 2: a Federal statistical agency must have credibility with those who use its data and information;

- Principle 3: a Federal statistical agency must have the trust of those whose information it obtains;

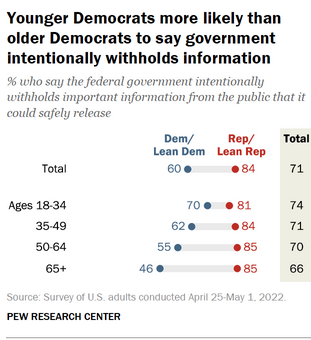

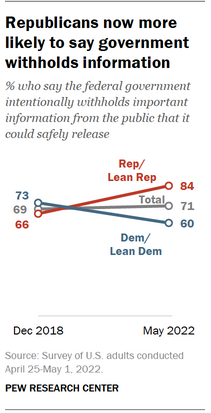

Waning trust

Computational Reproducibility and Official Statistics

Agencies do provide detailed information on sources

- Surveys

- Administrative data

Computational Reproducibility and Official Statistics

But: Availability of “computing instructions”?

- Code for cleaning, aggregation, imputation

- Including for disclosure avoidance

Computational Reproducibility and Official Statistics

But: Availability of reliable, trusted data archives

- Of released data – ability to reproduce downstream uses

- Of source data – ability to reproduce released data

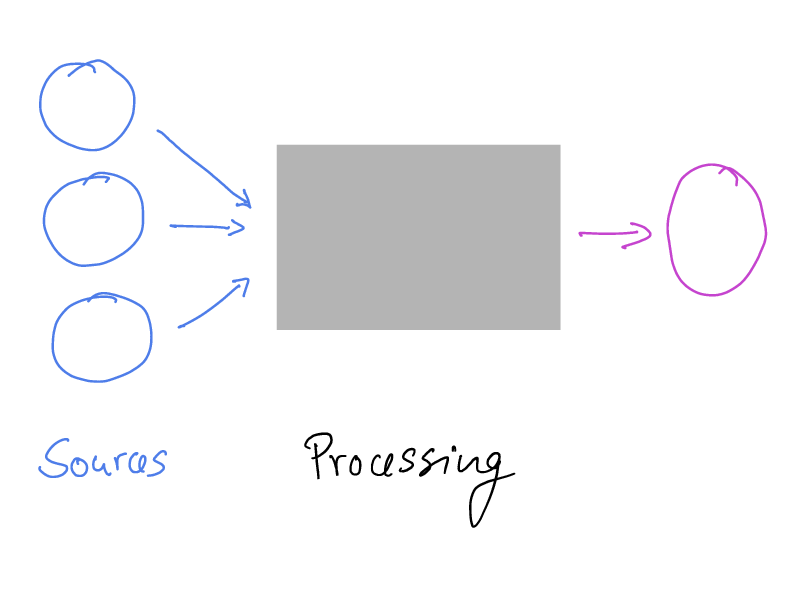

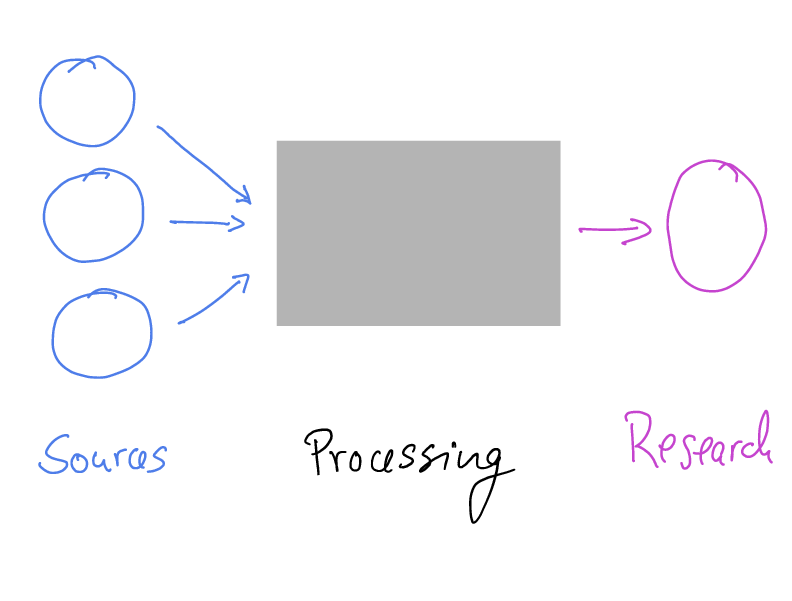

The analogy


Some principles from the academic world

Which are starting to be infused into the federal system

FAIR Principles

FAIR:

- Findable

- Accessible

- Interoperable

- Reusable

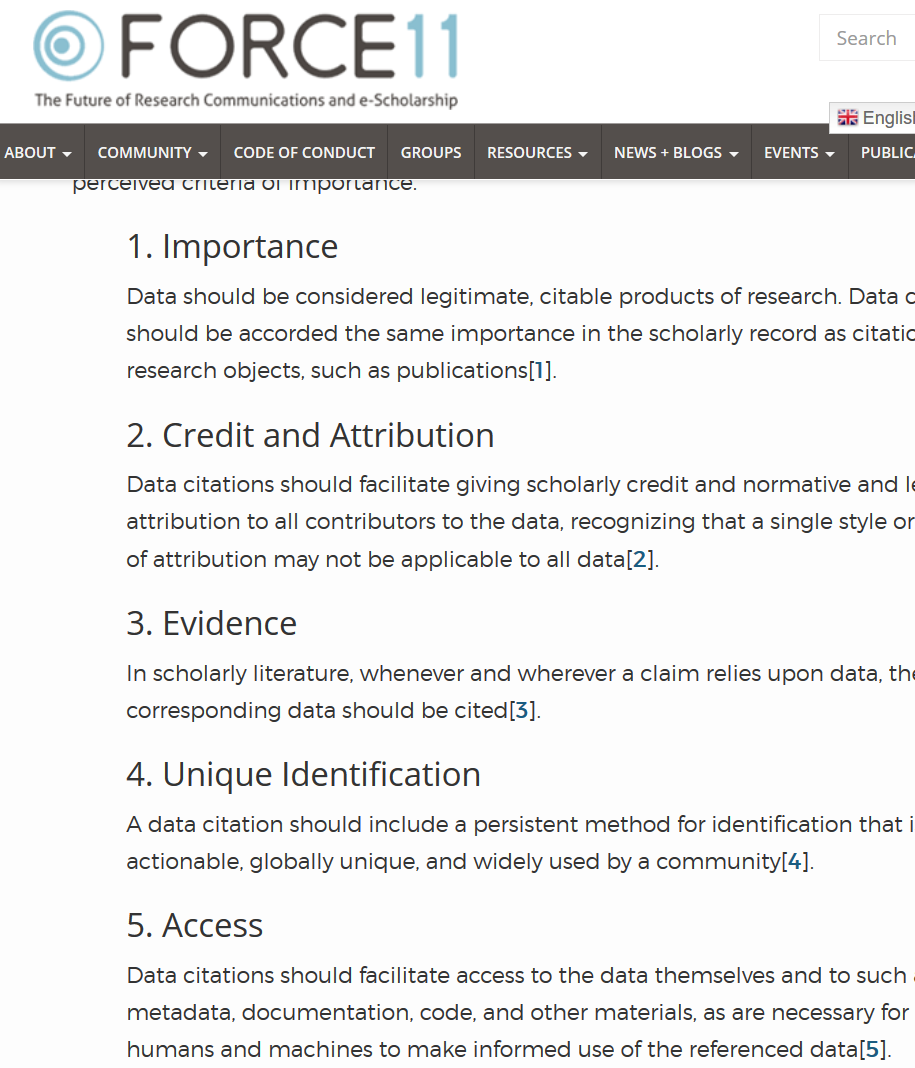

Data Citation Principles

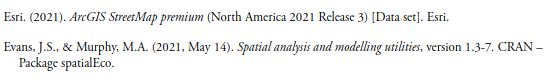

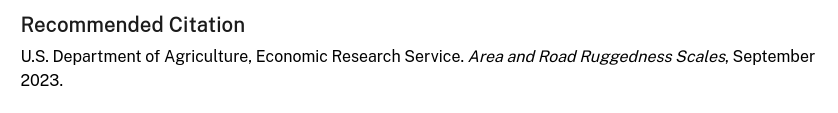

An example from ERS

Website


Sources

High-level description of sources

Sources

Sources are cited!

Methods

High-level description of methods, but no (obvious) code

Methods

Some methods (R code) are cited

Own citation?

Own citation does not include a URL

Findability?


Not even close.

Repeatability of downloads?

URL is

https://ers.usda.gov/webdocs/DataFiles/107356/RuggednessScale2010tracts.xlsx?v=6316.8

- What will the 2020 tract-based data URL look like?
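Splitting that URL into its parts shows why its successor cannot be predicted: only the file name is readable, while the numeric directory and the `v=` query parameter look like internal identifiers. Treating `107356` and `v` as opaque identifiers is an assumption based only on their appearance. A short illustration using only Python's standard library:

```python
from pathlib import PurePosixPath
from urllib.parse import parse_qs, urlsplit

url = ("https://ers.usda.gov/webdocs/DataFiles/107356/"
       "RuggednessScale2010tracts.xlsx?v=6316.8")

parts = urlsplit(url)
path = PurePosixPath(parts.path)

# Human-readable part: the file name, which encodes the 2010 tract vintage.
print(path.name)              # RuggednessScale2010tracts.xlsx
# Opaque parts: a numeric directory and a version-like query parameter.
print(path.parent.name)       # 107356
print(parse_qs(parts.query))  # {'v': ['6316.8']}
```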

Reproducibility?

- Most of the data inputs seem to be public data, or commercially available (ESRI)

- If the code were provided, others should be able to reproduce the analysis

Opening up technical possibilities

How can we know that a data source is reliably obtained?

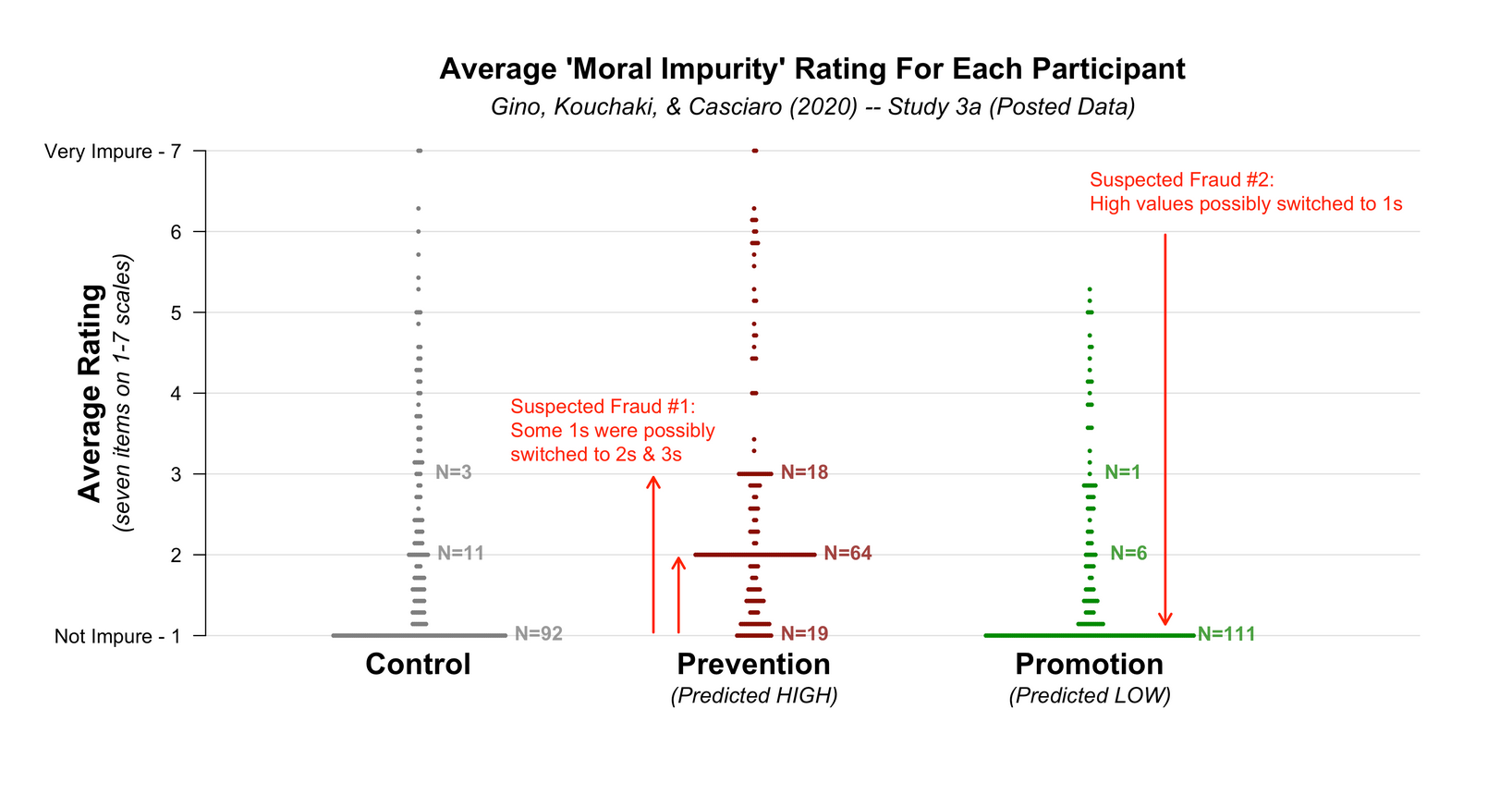

Consider the case of Gino

Francesca Gino

The case of Gino

- Francesca Gino was a tenured professor at Harvard Business School, writing on honesty (!)

The case of Gino

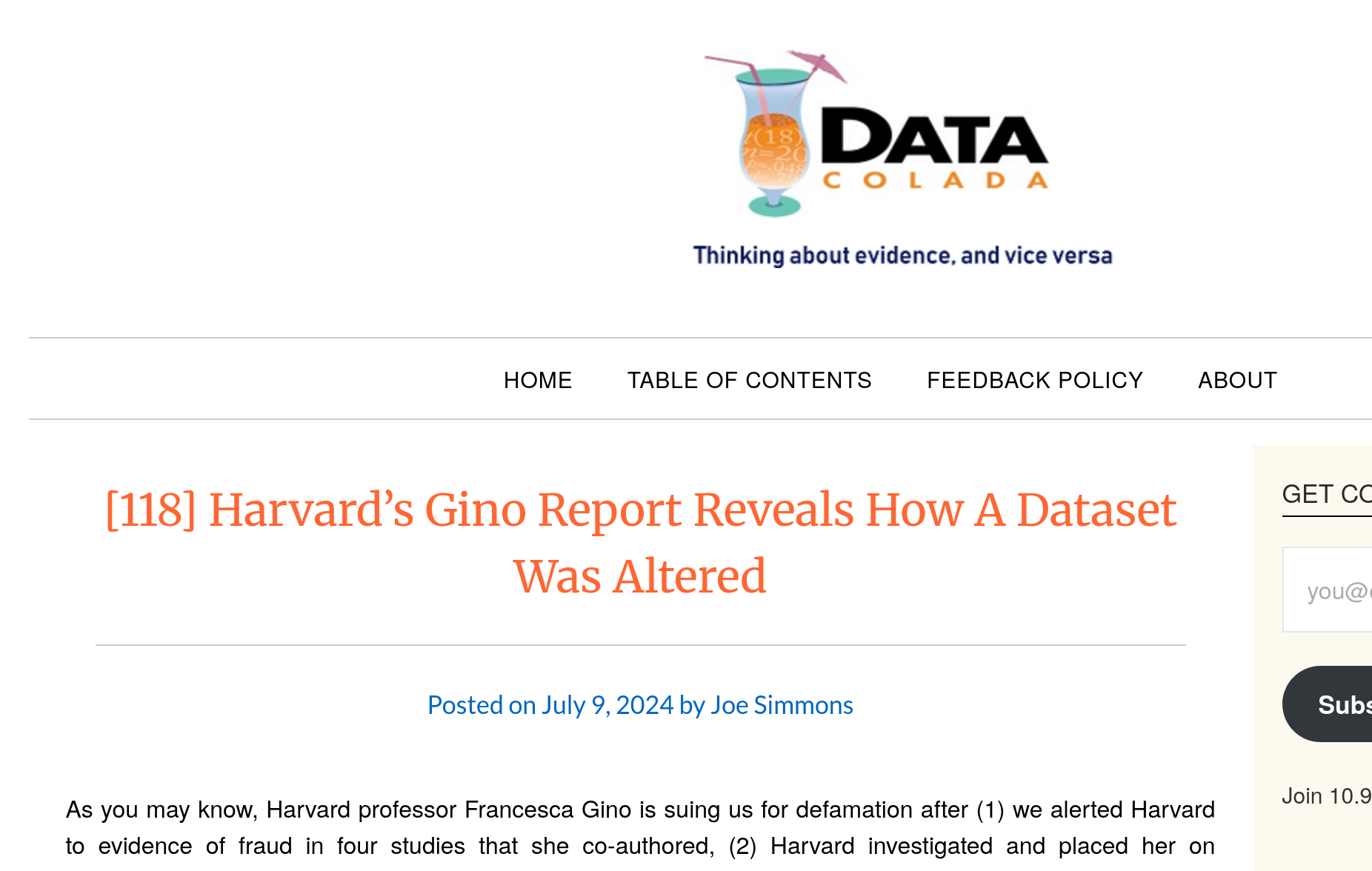

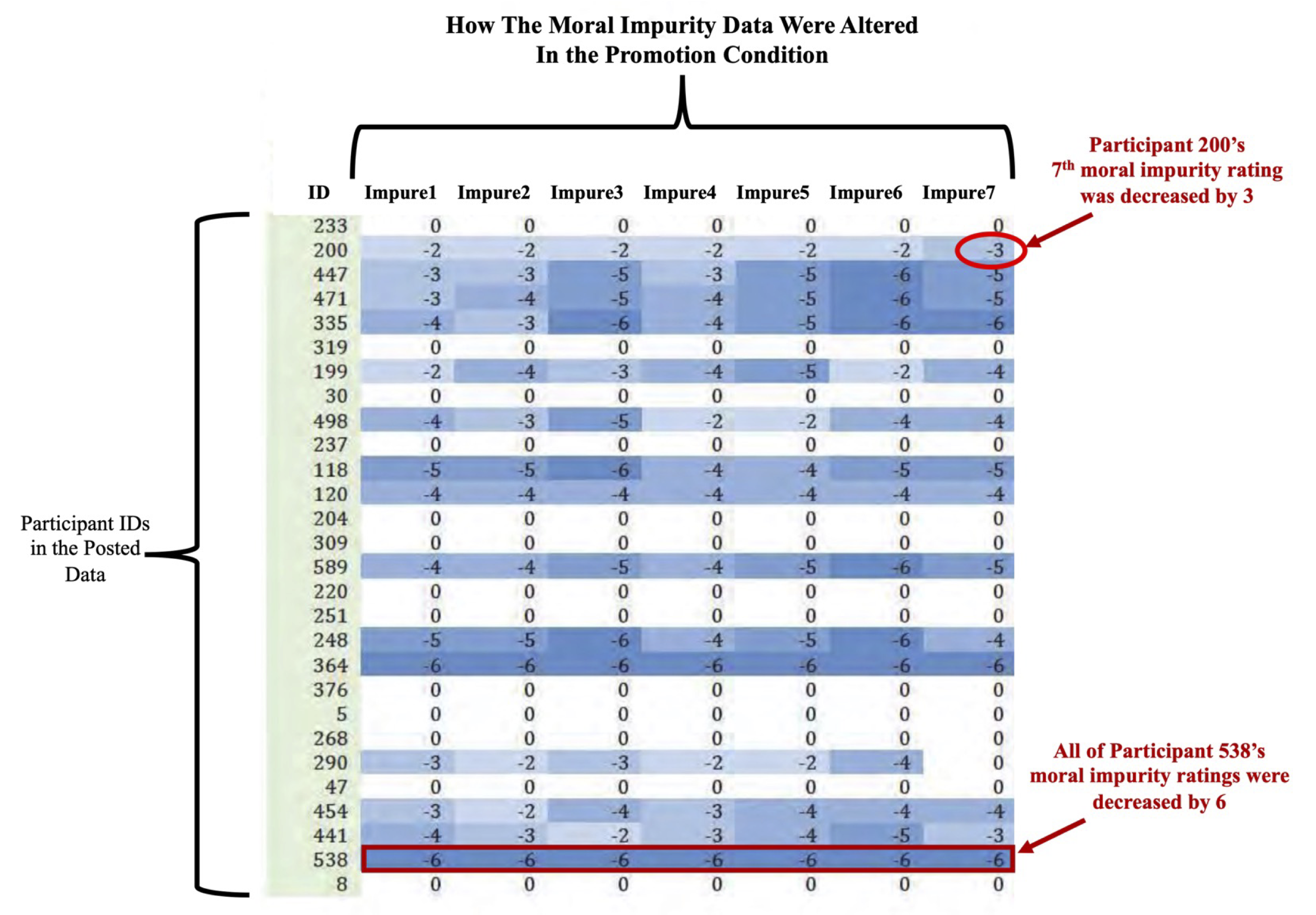

- Several articles were investigated by third parties (in particular Data Colada [9]) and found to be problematic

The case of Gino

- At least one of them relied on data that had been manipulated AFTER collection, but BEFORE analysis.

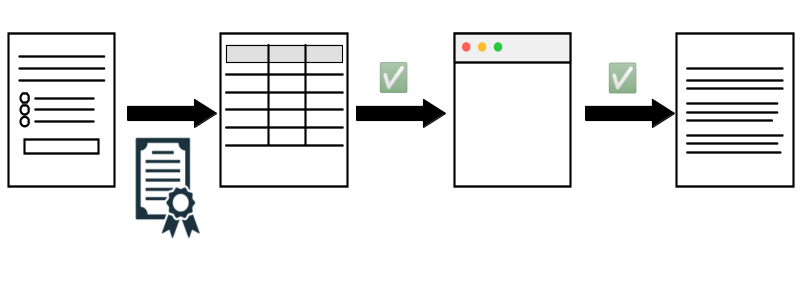

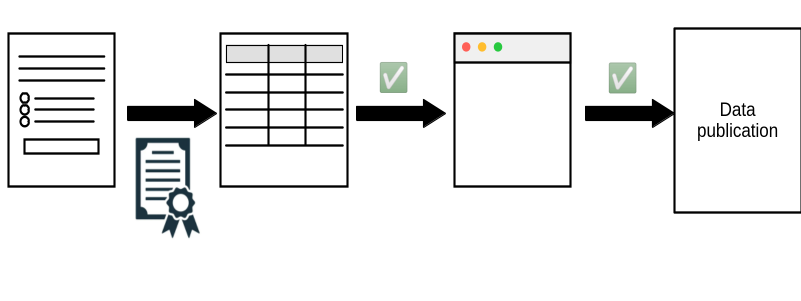

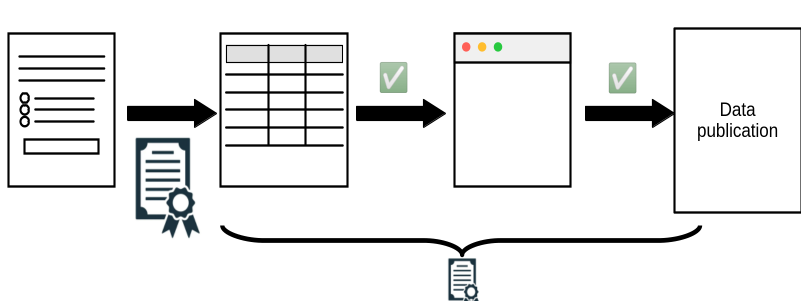

Generic survey processing


Requiring transparency in academia

Verifying transparency in academia

Verification by journals

- Provision (publication of materials) provides transparency

- Verification (running the analysis again, i.e., computational reproducibility) compensates for mistrust or the absence of trust

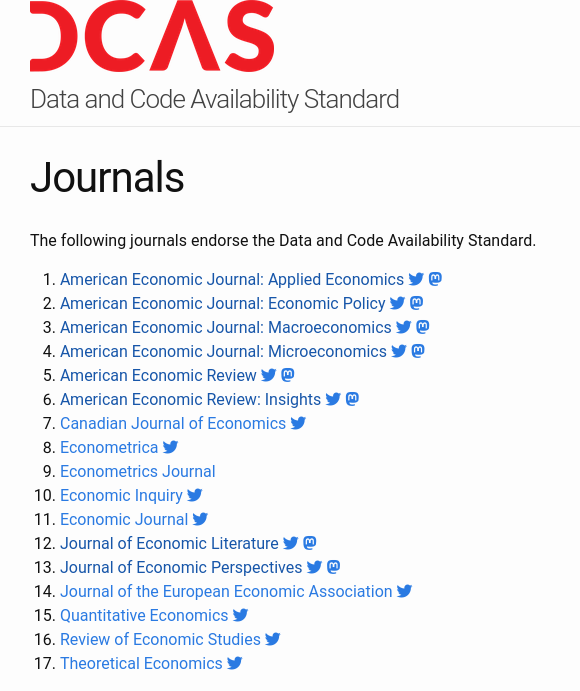

Which journals again

- American Economic Association (8)

- Econometric Society (3)

- Canadian Journal of Economics (1)

- Royal Economic Society (2)

- Western Economic Association International (1)

- European Economic Association (1)

- Review of Economic Studies (1)

- Journal of the European Economic Association (1)

- Journal of Political Economy (3)

- American Journal of Political Science (1)

- American Political Science Review (1)

Verification by others

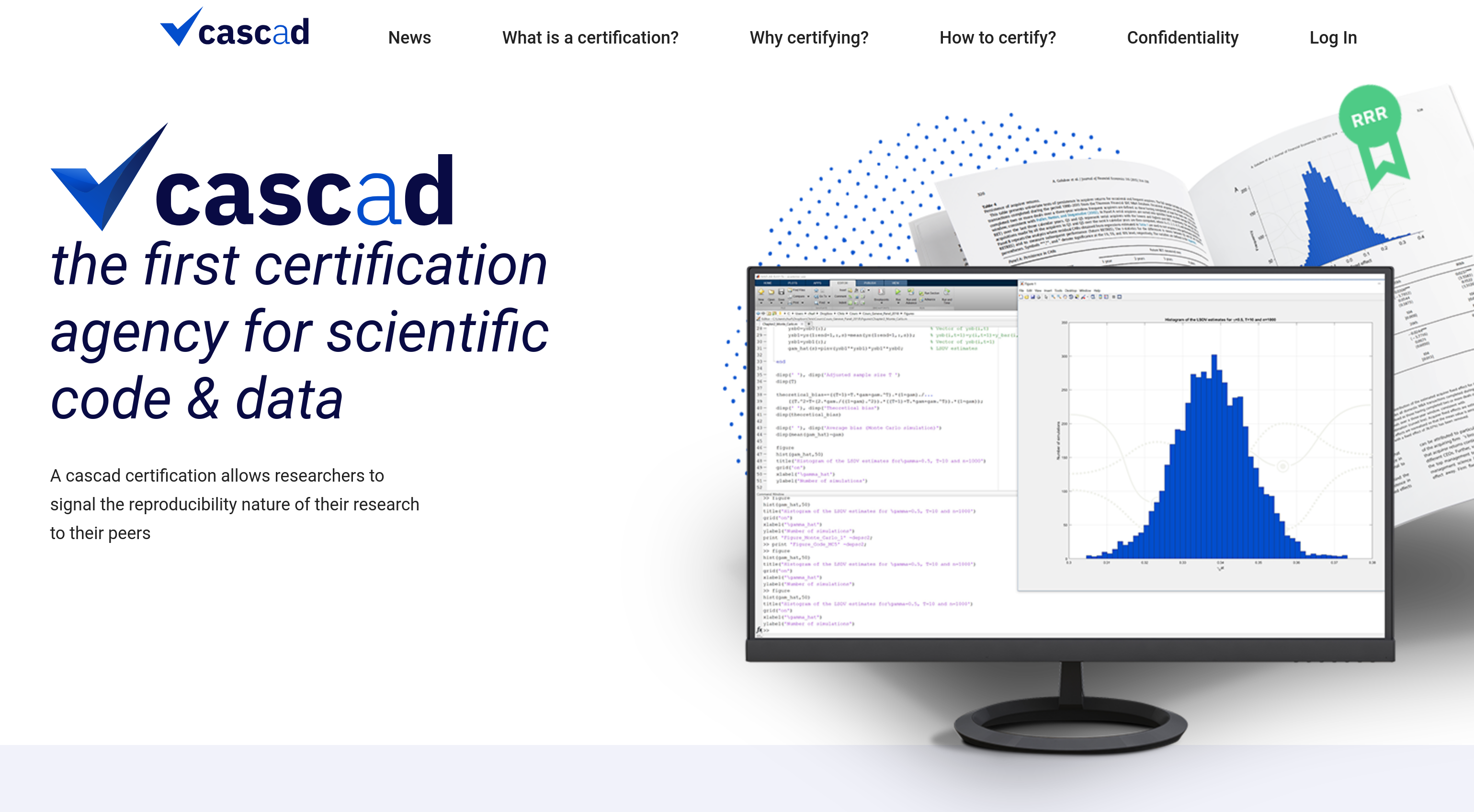

- Pre-publication: cascad

- Post-publication: Data Colada, Institute for Replication

Verification by institutions

Taking it a step further


- This has been discussed by the authors behind Data Colada

- Survey tool provider (Qualtrics, etc.) exports data and posts a checksum

- Survey tool provider exports data only to the institution, directly into a trusted repository; researchers obtain the data from there (with privacy protections)
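The checksum variant can be sketched in a few lines. The provider hashes the exported file at export time and publishes the digest, and anyone who later holds the file recomputes it and compares. This is a minimal Python sketch assuming SHA-256. The file name and contents are hypothetical, and the sketch does not describe how Qualtrics or any other provider actually exports data.

```python
import hashlib
import tempfile
from pathlib import Path


def sha256_of(path, chunk_size=1 << 20):
    """Return the hex SHA-256 digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_posted_checksum(path, posted_hex):
    """True if the file on disk still matches the digest posted at export time."""
    return sha256_of(path) == posted_hex.strip().lower()


# Demonstration with a hypothetical export in a temporary directory.
with tempfile.TemporaryDirectory() as tmp:
    export = Path(tmp) / "survey_export.csv"
    export.write_text("id,answer\n1,yes\n")
    posted = sha256_of(export)                  # what the provider would publish
    untouched = matches_posted_checksum(export, posted)
    export.write_text("id,answer\n1,no\n")      # an edit made after the export
    edited = matches_posted_checksum(export, posted)

print(untouched, edited)  # True False
```

Any change to the file after export, however small, breaks the match. This is what makes the posted digest useful to a later verifier.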

Does not prevent all fraud

Toronto researcher loses Ph.D.

MIT student makes up firm data

Can Assurances be created for Statistical Agencies?

Challenges for statistical agencies

- Documenting full transparency is hard

- Complex and legacy survey tools make the process harder

- Presence of (legitimate!) manual edits is an issue

- Production processes are long and complex

- Much of the code base is not open source

How to document the full process?

A sketch: Transparency Certified

Work in progress

Wrapping it all up

What is the state of reproducibility and transparency in academic economics?

- An increasing number of journals are not just requiring complete data, code, and transparent description, but also verifying that the code and data are correct.

- At the AEA: since 2019, we have reviewed around 3,000 articles and run the code for about two-thirds of them.

What are the benefits of reproducibility and transparency?

- Greater trust in the results

- Greater ease of building on results

- Greater transparency of the process, but also of the provenance

Increasingly broad consensus in academia

- FAIR principles

- Data Citation Principles

- Computational Reproducibility

What are the implications for statistical agencies?

Producers of statistical products

- May want to provide greater transparency into the production process.

- May need to do more for long-term, unbiased preservation of input data, output products, and code/software to link the two.

- May want to start with the low-hanging fruit: dashboards and fully public processes.

Coherence with stated principles

The emerging consensus is fully in line with the decades-old principles of statistical agencies:

Greater trust by the public?

- Transparency should be correlated with greater trust in the work of the statistical agencies

- But: Transparency can also lead to vulnerability through misinterpretation (no panacea)

Thank you

One more thing…

That confidential code thing…

- IRS variable names

- File paths, because your IT department said so

- Use of confidential data in code (`if name="Lars" then confid=2`)

Solution

Don’t do that.

Solution

Appendix

Secrets in the code

What are secrets?

- API keys

- Login credentials for data access

- File paths (FSRDC!)

- Variable names (IRS!)

Standard practice

Store secrets in environment variables or files that are not published.

Some services are serious about this

GitHub secret scanning
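GitHub's service looks for known credential formats. For project-specific secrets, such as the confidential variable names `q2f` and `q3e` in the example later in this appendix, a simple local check before a disclosure request serves a similar purpose. This is a minimal Python sketch, not GitHub's implementation. The token list, file suffixes, and file names are hypothetical.

```python
import re
import tempfile
from pathlib import Path


def find_confidential_tokens(root, tokens, suffixes=(".do", ".R", ".py")):
    """Return (file, line number, token) for each whole-word match in code files."""
    pattern = re.compile(r"\b(" + "|".join(map(re.escape, tokens)) + r")\b")
    root = Path(root)
    hits = []
    for path in sorted(root.rglob("*")):
        if not (path.is_file() and path.suffix in suffixes):
            continue
        text = path.read_text(errors="replace")
        for lineno, line in enumerate(text.splitlines(), start=1):
            for match in pattern.finditer(line):
                hits.append((path.relative_to(root).as_posix(), lineno, match.group(1)))
    return hits


# Demonstration: hypothetical project with one released and one confidential file.
with tempfile.TemporaryDirectory() as tmp:
    Path(tmp, "main.do").write_text("gen logprofit = log($confprofit)\n")
    Path(tmp, "confparms.do").write_text("global confprofit q2f\nglobal confemploy q3e\n")
    hits = find_confidential_tokens(tmp, ["q2f", "q3e"])

print(hits)  # [('confparms.do', 1, 'q2f'), ('confparms.do', 2, 'q3e')]
```

A hit in the confidential parameters file, which is excluded from the release, is expected. A hit in any released file signals a leak.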

Where to store secrets

- environment variables

- “dot-env” files (Python), “Renviron” files (R)

- or some other clearly identified file in the project or home directory

Environment variables

Typed interactively (here for Linux and Mac)

(this is not recommended)

Storing these in files

The same syntax is used for the contents of “dot-env” or “Renviron” files, and in fact for bash or zsh startup files (.bash_profile, .zshrc)

Using In R

Edit the .Renviron file (note the dot!):

Use the variables defined in .Renviron:

Using In Python

Loading regular environment variables:
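In Python, the standard library's `os.environ` mapping holds the environment variables of the running process. This is a minimal sketch. The helper name `get_secret` and the variable name `MYAPIKEY` are hypothetical.

```python
import os


def get_secret(name):
    """Return environment variable `name`, failing loudly if it is not set."""
    value = os.environ.get(name)
    if value is None:
        raise KeyError(f"{name} is not set; define it outside the published code")
    return value


# Usage (MYAPIKEY is a hypothetical variable name):
# api_key = get_secret("MYAPIKEY")
```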

Loading with dotenv
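The python-dotenv package provides `load_dotenv()`, which reads a `.env` file into `os.environ`. To show what such a loader does without the extra dependency, here is a simplified stand-in that uses only the standard library. It is not the python-dotenv parser, which handles more cases, and the function name `load_env_file` is hypothetical.

```python
import os
from pathlib import Path


def load_env_file(path=".env", override=False):
    """Load simple KEY=value lines from `path` into os.environ; return them.

    Simplified illustration only: skips blank lines and # comments, strips an
    optional leading "export " and matching surrounding quotes. It ignores
    escapes, multi-line values, and inline comments.
    """
    loaded = {}
    env_file = Path(path)
    if not env_file.exists():
        return loaded  # no secrets file present: nothing to load
    for raw in env_file.read_text().splitlines():
        line = raw.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        if line.startswith("export "):
            line = line[len("export "):]
        key, value = (part.strip() for part in line.split("=", 1))
        if len(value) >= 2 and value[0] == value[-1] and value[0] in "\"'":
            value = value[1:-1]
        loaded[key] = value
        if override or key not in os.environ:
            os.environ[key] = value
    return loaded


# Usage: call once at the top of the script, then read os.environ as usual.
# load_env_file(".env")
```

Whichever loader is used, list `.env` in `.gitignore` so the file itself is never published.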

Using in Stata

Yes, this also works in Stata

and via (what else) a user-written package for loading from files:

Simplest solution

```stata
//============ non-confidential parameters =========
include "config.do"

//============ confidential parameters =============
capture confirm file "$code/confidential/confparms.do"
if _rc == 0 {
    // file exists
    include "$code/confidential/confparms.do"
}
else {
    di in red "No confidential parameters found"
}
//============ end confidential parameters =========
```

Confidential code?

What is confidential code, you say?

- In the United States, some variables on IRS databases are considered super-top-secret. So you can’t name that-variable-that-you-filled-out-on-your-Form-1040 in your analysis code for those same data. (In jargon, they are often referred to as “Title 26 variables.”)

What is confidential code, you say?

- Your code contains the random seed you used to anonymize the sensitive identifiers. This might allow someone to reverse-engineer the anonymization, so it is not a good idea to publish it.
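Why the seed is sensitive: a seeded pseudo-random procedure is fully deterministic, so whoever knows the seed can regenerate the anonymization key. The following minimal Python sketch uses a hypothetical seeded-shuffle pseudonymization and reuses the seed 12345 from the example later in this appendix. It assumes an outsider can reconstruct the ordered list of identifiers (here, sorted county names).

```python
import random


def pseudonymize(ids, seed):
    """Assign each identifier a code 1..n using a seeded shuffle (illustrative only)."""
    codes = list(range(1, len(ids) + 1))
    random.Random(seed).shuffle(codes)
    return dict(zip(ids, codes))


counties = sorted(["Tompkins, NY", "Cayuga, NY", "Seneca, NY"])  # hypothetical IDs
released_key = pseudonymize(counties, seed=12345)  # the key itself is never released

# An outsider who learns the seed and can reconstruct the sorted input list
# regenerates the key exactly, and can invert it:
rebuilt = pseudonymize(counties, seed=12345)
decode = {code: county for county, code in rebuilt.items()}
print(rebuilt == released_key)  # True
```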

What is confidential code, you say?

- You used a look-up table hard-coded in your Stata code to anonymize the sensitive identifiers (`replace anoncounty=1 if county=="Tompkins, NY"`).

A really bad idea, but yes, you probably want to hide that.

What is confidential code, you say?

- Your IT specialist or disclosure officer thinks publishing the exact path to your copy of the confidential 2010 Census data, e.g., “/data/census/2010”, is a security risk and refuses to let that code through.

What is confidential code, you say?

- You have adhered to disclosure rules, but for some reason, the precise minimum cell size is a confidential parameter.

What is confidential code, you say?

So, reasonable or not, this is an issue. How do you handle it without messing up the code or spending hours redacting it?

Example

- This will serve as an example. None of this is specific to Stata, and the solutions for R, Python, Julia, Matlab, etc. are all quite similar.

- Assume that variables `q2f` and `q3e` are considered confidential by some rule, and that the minimum cell size `10` is also confidential.

Example

Only one line that does not contain “confidential” information.

Do not do this

A bad approach, because it literally makes more work for you and for future replicators, is to manually redact the confidential information with text that is not legitimate code:

The redacted program above will no longer run, and will be very tedious to un-redact if a subsequent replicator obtains legitimate access to the confidential data.

Better

Simply replacing the confidential values with valid placeholders in the programming language of your choice is already better. Here’s the confidential version of the file:

```stata
//============ confidential parameters =============
global confseed 12345
global confpath "/data/economic/cmf2012"
global confprofit q2f
global confemploy q3e
global confmincell 10
//============ end confidential parameters =========

set seed $confseed
use $confprofit $confemploy county using "${confpath}/extract.dta", clear
gen logprofit = log($confprofit)
collapse (count) n=$confemploy (mean) logprofit, by(county)
drop if n<$confmincell
graph twoway scatter n logprofit
```

Better

and this could be the released file, part of the replication package:

```stata
//============ confidential parameters =============
global confseed XXXX // a number
global confpath "XXXX" // a path that will be communicated to you
global confprofit XXX // Variable name for profit T26
global confemploy XXX // Variable name for employment T26
global confmincell XXX // a number
//============ end confidential parameters =========

set seed $confseed
use $confprofit $confemploy county using "${confpath}/extract.dta", clear
gen logprofit = log($confprofit)
collapse (count) n=$confemploy (mean) logprofit, by(county)
drop if n<$confmincell
graph twoway scatter n logprofit
```

While the code won’t run as-is, it is easy to un-redact, regardless of how many times you reference the confidential values (e.g., `q2f`) anywhere in the code.

Best

- Main file

- Conditional processing

- Separate file for confidential parameters which can simply be excluded from disclosure request
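Since none of this is specific to Stata, the same three parts translate directly to other languages. The following is a hedged Python sketch. The file names `confidential/confparms.py`, `extract.csv`, and `releasable/table1.csv`, and the parameters `CONFPATH` and `CONFMINCELL`, are hypothetical stand-ins for the Stata globals. `runpy.run_path` loads the parameter file, and the confidential step is simplified to small-cell suppression.

```python
import csv
import runpy
from pathlib import Path


def load_confidential_params(code_dir):
    """Run confidential/confparms.py if present; return its UPPERCASE names."""
    parms = Path(code_dir) / "confidential" / "confparms.py"
    if not parms.exists():
        print("No confidential parameters found")
        return {}
    values = runpy.run_path(str(parms))
    return {k: v for k, v in values.items() if k.isupper()}


def main(code_dir):
    """Rebuild the releasable table if possible, then always use the releasable copy."""
    conf = load_confidential_params(code_dir)
    safe = Path(code_dir) / "releasable"
    safe.mkdir(exist_ok=True)
    table = safe / "table1.csv"

    # :::: Process only if confidential data is present
    confpath = conf.get("CONFPATH")
    source = Path(confpath) / "extract.csv" if confpath else None
    if source is not None and source.exists():
        with open(source, newline="") as fh:
            kept = [row for row in csv.DictReader(fh)
                    if int(row["n"]) >= conf["CONFMINCELL"]]
        with open(table, "w", newline="") as fh:
            writer = csv.DictWriter(fh, fieldnames=["county", "n"],
                                    extrasaction="ignore")
            writer.writeheader()
            writer.writerows(kept)
    else:
        print("Skipping processing of confidential data")

    # :::: Process always: only releasable data from here on
    with open(table, newline="") as fh:
        return list(csv.DictReader(fh))
```

Running `main()` with the confidential folder present rebuilds the releasable table. If that folder is deleted, as in a public replication package, the second half still runs from the released copy.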

Best

Main file `main.do`:

```stata
//============ confidential parameters =============
capture confirm file "$code/confidential/confparms.do"
if _rc == 0 {
    // file exists
    include "$code/confidential/confparms.do"
}
else {
    di in red "No confidential parameters found"
}
//============ end confidential parameters =========

//============ non-confidential parameters =========
global safepath "$rootdir/releasable"
cap mkdir "$safepath"
//============ end parameters ======================
```

Best

Main file `main.do` (continued):

```stata
// :::: Process only if confidential data is present
capture confirm file "${confpath}/extract.dta"
if _rc == 0 {
    set seed $confseed
    use $confprofit $confemploy county using "${confpath}/extract.dta", clear
    gen logprofit = log($confprofit)
    collapse (count) n=$confemploy (mean) logprofit, by(county)
    drop if n<$confmincell
    save "${safepath}/figure1.dta", replace
}
else {
    di in red "Skipping processing of confidential data"
}
//============ at this point, the data is releasable ======

// :::: Process always
use "${safepath}/figure1.dta", clear
graph twoway scatter n logprofit
graph export "${safepath}/figure1.pdf", replace
```

Best

Auxiliary file `$code/confidential/confparms.do` (not released)

Best

Auxiliary file `$code/include/confparms_template.do` (this is released):

```stata
//============ confidential parameters =============
// Copy this file to $code/confidential/confparms.do and edit
global confseed XXXX // a number
global confpath "XXXX" // a path that will be communicated to you
global confprofit XXX // Variable name for profit T26
global confemploy XXX // Variable name for employment T26
global confmincell XXX // a number
//============ end confidential parameters =========
```

Best replication package

Thus, the replication package would have:

Keeping on top of provenance

- Licenses

- Streamlining for reproducibility

Licenses

Where does the file come from?

- How can we describe this later to somebody?

- Point-and-click steps are tedious to describe

- What are the rights we have?

What is a license?

A license (licence) is an official permission or permit to do, use, or own something (as well as the document of that permission or permit). [11] [12]

Examples

- Creative Commons licenses, used for artistic products and data

- Open Source licenses (BSD, GPL, MIT, etc.), used for software (code)

License applying to GeoDist data

- CEPII GeoDist is under an “Etalab 2.0 license”

Can we re-publish the file?

Downloading via code

Easiest:

Stata

Why not?

- Will it be there in two months? In six years?

- What if the internet connection is down?

Easy:

Stata

R

We will get to even better methods a bit later
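The two concerns above (will the URL still work, and what if the network is down?) both point toward downloading once and keeping the file with the project. The following is a minimal Python sketch of that idea, assuming the license permits keeping a copy. The function name and paths are hypothetical, and this is not necessarily what the Stata and R examples do.

```python
import shutil
import urllib.request
from pathlib import Path


def fetch_once(url, dest, timeout=60):
    """Download `url` to `dest` unless a local copy already exists; return dest."""
    dest = Path(dest)
    if dest.exists():
        return dest  # reuse the copy stored with the project: no network needed
    dest.parent.mkdir(parents=True, exist_ok=True)
    partial = dest.with_name(dest.name + ".part")
    with urllib.request.urlopen(url, timeout=timeout) as response, open(partial, "wb") as out:
        shutil.copyfileobj(response, out)
    partial.replace(dest)  # only a complete download receives the final name
    return dest


# Usage (URL and path are hypothetical placeholders):
# fetch_once("https://example.org/geodist.xlsx", "data/raw/geodist.xlsx")
```

Where the license allows re-publication, committing the downloaded file means the code keeps working after the URL moves.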

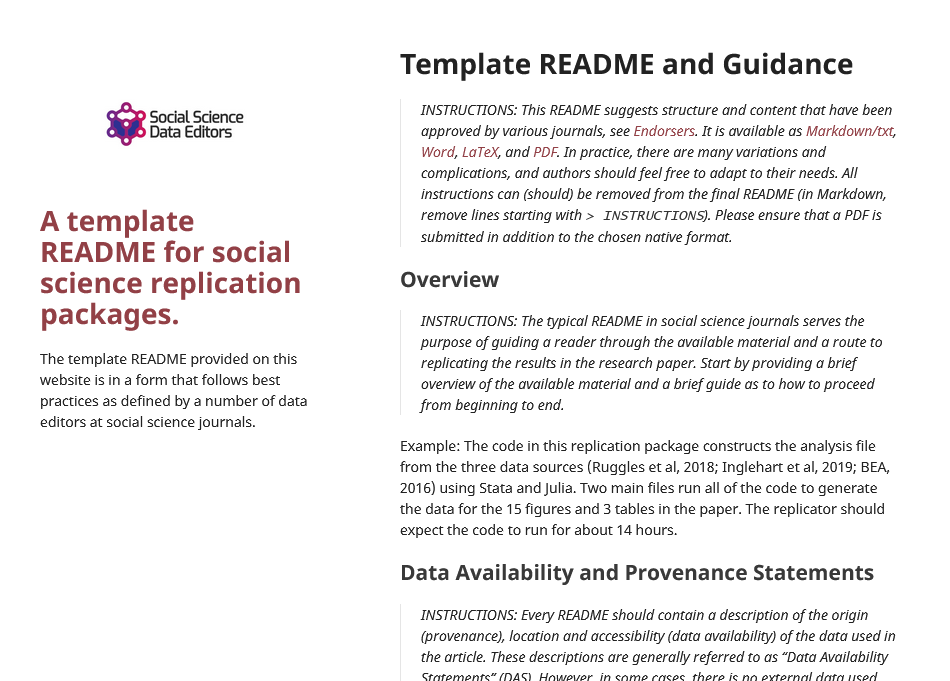

Creating a README

- Template README

- Cite both dataset and working paper

- Add data URL and time accessed (can you think of a way to automate this?)

- Add a link to license (also: download and store the license)
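One way to automate the URL and access time is to have the download step write them, together with a checksum and the license link, to a small log that the README can point to. This is a minimal Python sketch. The log name `provenance.json` and its field names are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone
from pathlib import Path


def record_provenance(data_file, url, license_url, log="provenance.json"):
    """Append file name, source URL, UTC access time, SHA-256 and license to a JSON log."""
    entry = {
        "file": Path(data_file).name,
        "url": url,
        "accessed_utc": datetime.now(timezone.utc).isoformat(timespec="seconds"),
        "sha256": hashlib.sha256(Path(data_file).read_bytes()).hexdigest(),
        "license": license_url,
    }
    log_path = Path(log)
    entries = json.loads(log_path.read_text()) if log_path.exists() else []
    entries.append(entry)
    log_path.write_text(json.dumps(entries, indent=2))
    return entry


# Usage right after a download (all arguments are hypothetical placeholders):
# record_provenance("data/raw/geodist.xlsx", "https://example.org/geodist.xlsx",
#                   "https://example.org/license")
```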

Link

Wrapping it all up

Wrapping up

- Public replication package contains intelligible code, omits confidential details (but provides template code), has detailed data provenance statements

- Confidential replication package contains all the same, plus the confidential code, and is archived in the FSRDC

Things to remember

- Use code to save figures and tables (`estout`, `graph export`, `regsave`)

- Create log files for each run (`stata -b do file.do` is not fine-grained enough)
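Batch mode writes a single log named after the do-file, which the slide considers not fine-grained enough. The underlying idea, one timestamped log per run, can be sketched for Python scripts with the standard `logging` module (Python 3.8+ is required for `force=True`). The directory and file-name pattern are hypothetical.

```python
import logging
from datetime import datetime
from pathlib import Path


def start_run_log(log_dir="logs"):
    """Send logging output to a new timestamped file for this run; return its path."""
    log_dir = Path(log_dir)
    log_dir.mkdir(parents=True, exist_ok=True)
    stamp = datetime.now().strftime("%Y%m%d-%H%M%S")
    log_file = log_dir / f"run-{stamp}.log"
    logging.basicConfig(
        filename=log_file,
        level=logging.INFO,
        format="%(asctime)s %(levelname)s %(message)s",
        force=True,  # drop handlers left over from an earlier configuration
    )
    return log_file


# Usage at the top of a script:
# log_file = start_run_log()
# logging.info("Starting analysis")
```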

Things to remember

Run it all again, top to bottom!

Things to remember

- When submitting a disclosure review request, remember to request release of the code as well

- When outputting statistics, consider the disclosure rules: the fewer changes required, the faster the output is released (in theory), and, in particular, the fewer the surprises

- Do not think “nobody will ever read this code” - somebody is very likely to!

End

Now you wait for the replicators to show up!

Footnotes

1. Bollen et al. 2015. “Social, Behavioral, and Economic Sciences Perspectives on Robust and Reliable Science.” National Science Foundation. https://www.nsf.gov/sbe/AC_Materials/SBE_Robust_and_Reliable_Research_Report.pdf

2. Ambrus, Attila, Erica Field, and Robert Gonzalez. 2020. “Loss in the Time of Cholera: Long-Run Impact of a Disease Epidemic on the Urban Landscape.” American Economic Review 110 (2): 475–525. https://doi.org/10.1257/aer.20190759

3. Ambrus, Attila, Erica Field, and Robert Gonzalez. 2020. “Data and Code for: Loss in the Time of Cholera: Long-run Impact of a Disease Epidemic on the Urban Landscape.” Nashville, TN: American Economic Association [publisher]. Ann Arbor, MI: Inter-university Consortium for Political and Social Research [distributor], 2020-01-31. https://doi.org/10.3886/E111523V2

4. Weeden, K. A. 2023. “Crisis? What Crisis? Sociology’s Slow Progress Toward Scientific Transparency.” Harvard Data Science Review 5 (4). https://doi.org/10.1162/99608f92.151c41e3

5. United Nations. Fundamental Principles of Official Statistics.

6. National Academies of Sciences, Engineering, and Medicine. 2017. Principles and Practices for a Federal Statistical Agency: Sixth Edition. Washington, DC: The National Academies Press. https://doi.org/10.17226/24810

7. Data Citation Synthesis Group. 2014. Joint Declaration of Data Citation Principles. Martone M. (ed.). San Diego, CA: FORCE11. https://www.force11.org/group/joint-declaration-data-citation-principles-final

8. USDA Economic Research Service. Area and Road Ruggedness Scales. https://ers.usda.gov/data-products/area-and-road-ruggedness-scales/

9. Data Colada. https://datacolada.org/109, https://datacolada.org/110, https://datacolada.org/111, https://datacolada.org/112, https://datacolada.org/114, https://datacolada.org/118

10. Jones, M. 2024. “Introducing Reproducible Research Standards at the World Bank.” Harvard Data Science Review 6 (4). https://doi.org/10.1162/99608f92.21328ce3