1. Job loss due to automation in lower-skille

2. AI-driven wealth concentration in tech-sav

3. Digital literacy gap leading to economic d

4. Lack of universal access to AI technology.

5. AI-driven bias in hiring and selection pro

6. Imbalance in job market due to AI speciali

7. Data privacy issues affecting vulnerable p

8. AI-driven services predominantly targeting

9. Algorithms exacerbating social inequality

10. Inclusive AI product development lacking.

11. Higher prices due to AI-enhanced products

12. AI-fueled gentrification in tech-centered

13. Anticompetitive practices bolstered by AI

14. Lack of labor rights for jobs displaced b

15. Educational imbalance due to AI-learning

16. AI in healthcare excluding lower socioeco

17. Disproportionate influence of AI in polit

18. Undervaluing of human skills in favor of

19. Biased AI systems perpetuating discrimina

20. AI reinforcing societal hierarchies via d

Reproducibility in an AI World

2026-05-01

Who am I?

Lars Vilhuber

Economist at Cornell University, and the American Economic Association’s Data Editor.

Data Editor of the AEA

~3000 Manuscripts and 4500 Reports, approx. 4800 authors reached.

Concerns voiced

AI introduces massive challenges for reproducibility.

Thesis

None of this is AI-specific, but the difficulties may be magnified when using LLMs in research.

Counterpoint

Not unlike difficulties researchers face with

- any black-box systems

- existing commercial software

- external APIs of any kind

Computational reproducibility

In this talk, we focus on computational reproducibility, though the ultimate goal remains replicability.

LLM vs. AI

I will distinguish LLM (large language models) from AI (artificial intelligence):

- LLM: models that are trained for a specific (possibly broad) purpose

- AI: online systems that use LLMs, such as GPT, Claude, etc.

Reproducibility may be hard but important

The Problem

Computational Reproducibility in the Social Sciences

- Research communities increasingly require sharing of data, code, and methods

- Trend is clear: any data that can reasonably be shared, and all code, should be made available

- Consumers combine data and code, re-run it, and validate the analysis

Computational Reproducibility in the Social Sciences

But what makes this hard in practice?

- Data withheld for ethical, legal, or contractual reasons

- Processing is time-consuming or requires rare computing resources

- Raw data may be transient, ephemeral, or deleted

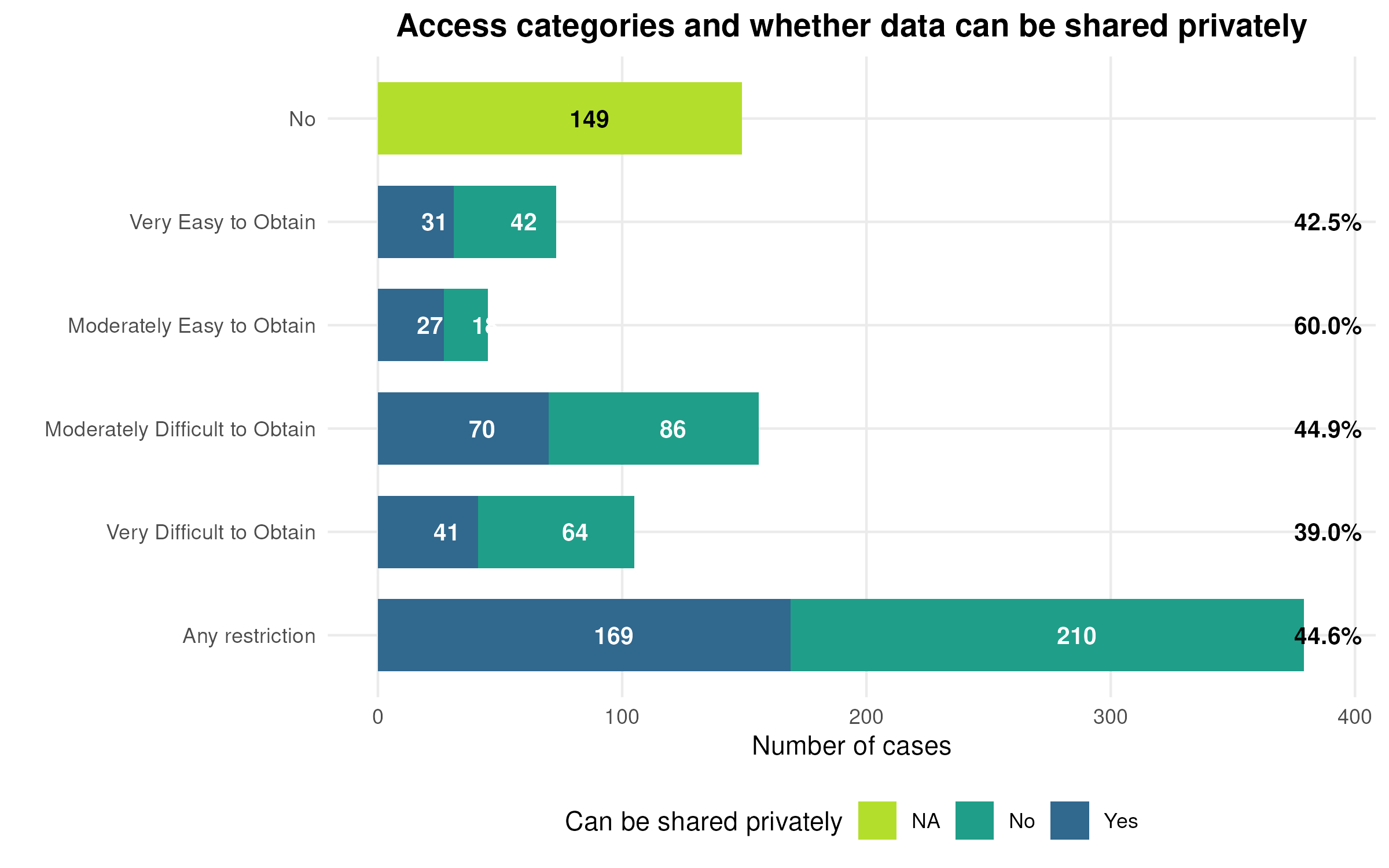

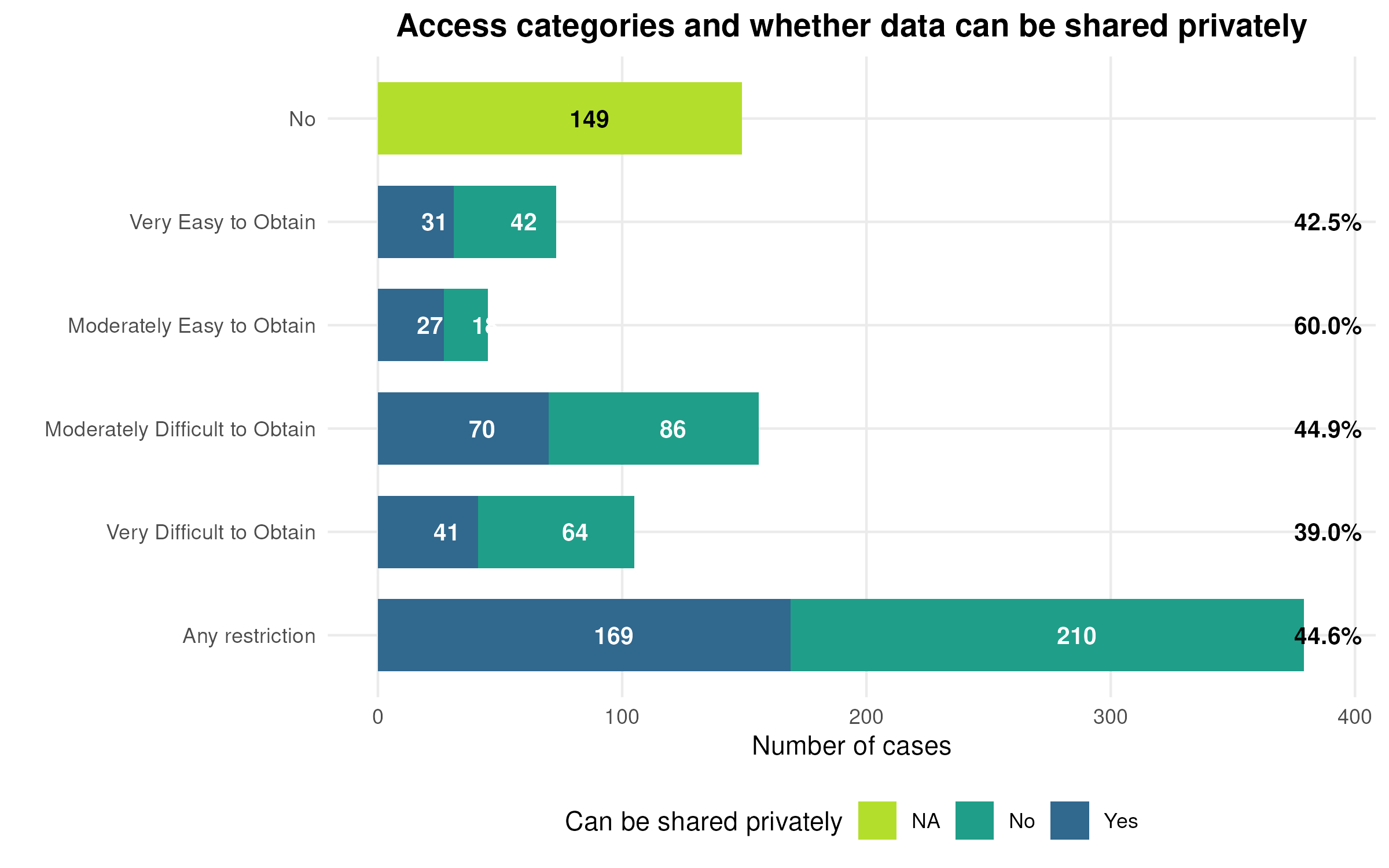

Inaccessibility is normal

From the AEA Data Editor’s experience (2025, 384 papers assessed):

- 38% used data with no access restrictions — in scope for replication studies

- 62% used data subject to access restrictions

… but solvable

Of those restricted papers:

- The AEA team obtained private access to 45% of the 62%

- Conducted reproducibility checks despite data not being in public packages

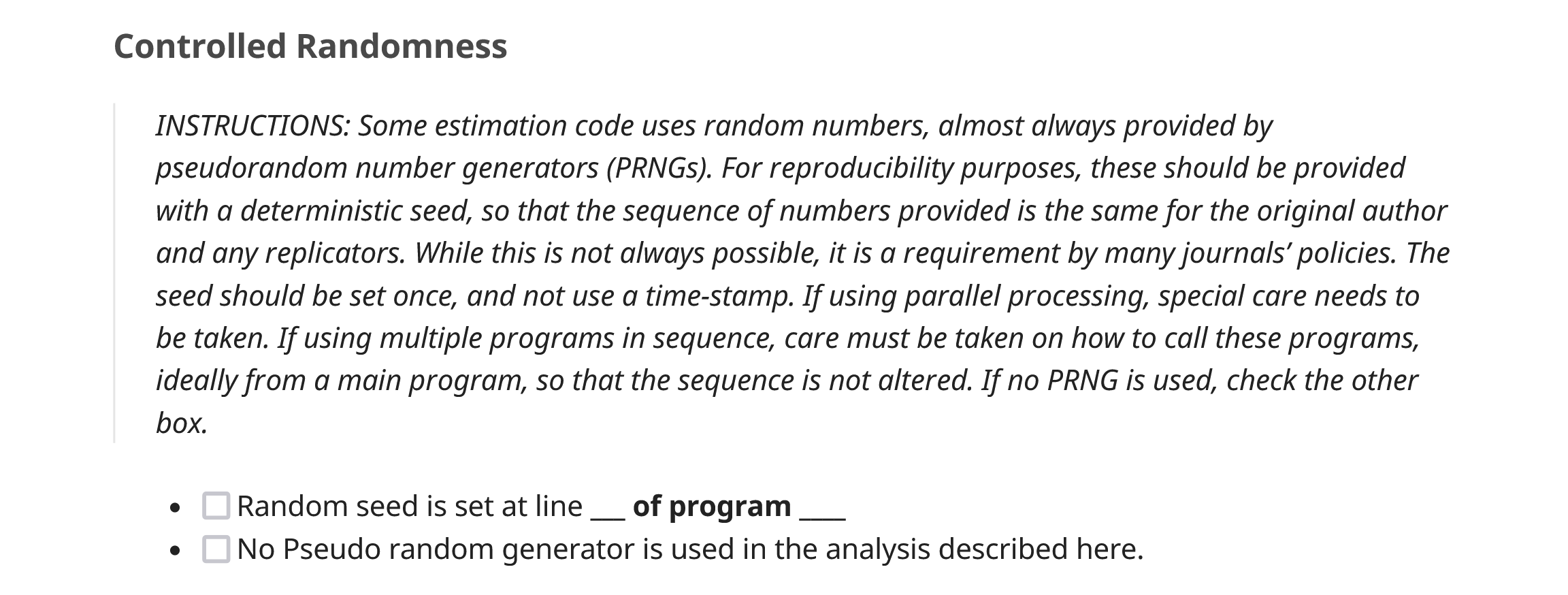

Transparency Targets

We want to check that

Tools and Standards

In Economics,

- Use the Template README (presentation)

- Describe data provenance and access conditions for all raw data

- Describe all data transformations starting with raw data

- Provide all code, including for data you cannot share

“Our project involves 60,000 PDFs … categorize using LLMs”

Let’s talk about data

Types of Data

- Data used for training

- Data used for analysis

- Data output by the algorithm

Questions for Data

- Where did the (training/analysis) data come from?

- Can you share it?

- Can others obtain access?

- Is it still there?

- Where did you put the analysis data?

- Can you share it?

- If not, why not?

- Can you preserve it?

Guidance in README

Data Provenance

Are models data or software? - will treat as software here.

Data Provenance

- Are the source data preserved?

- Often large text archives

- Format relevant: physical or electronic copy?

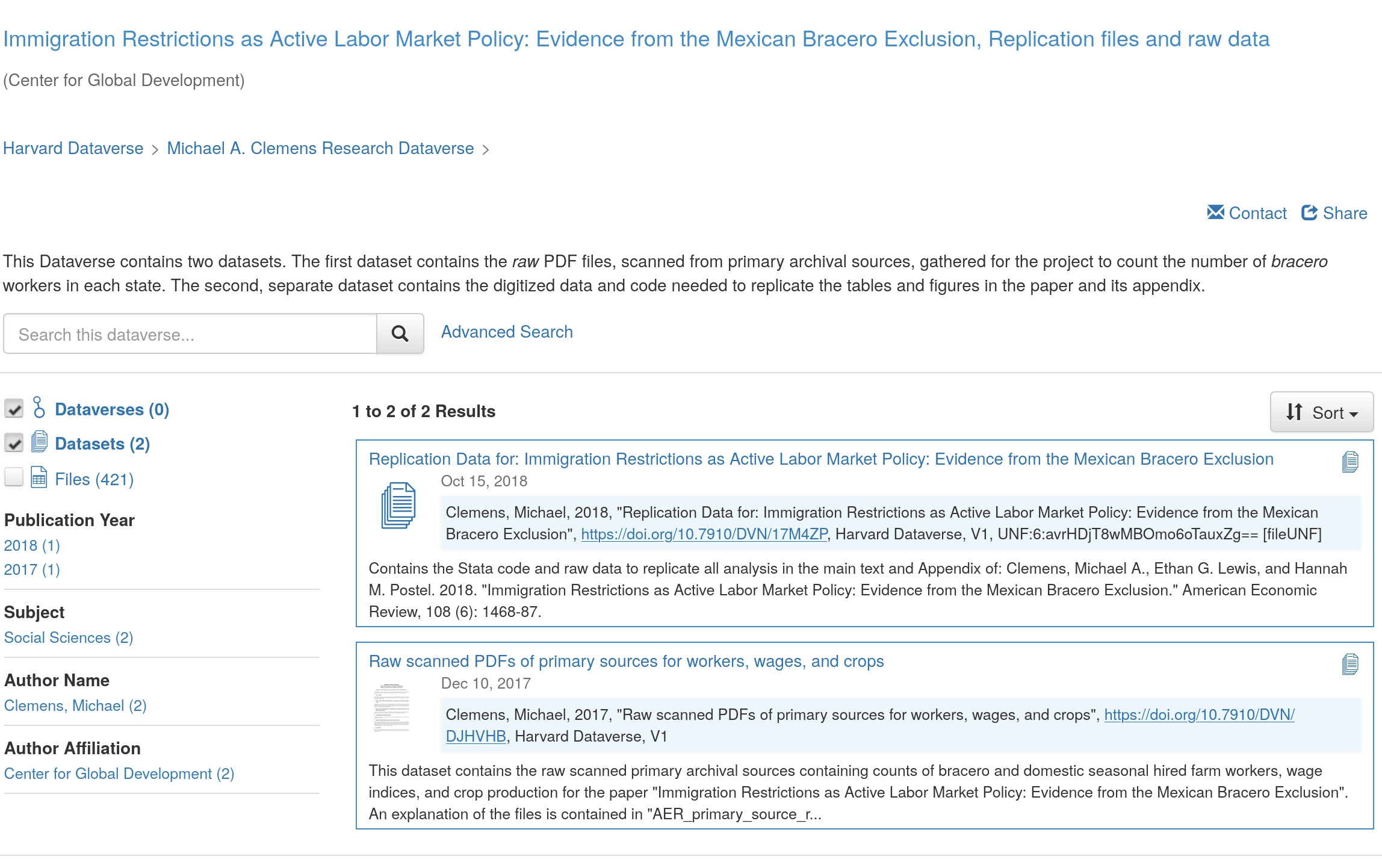

Good example

“Immigration Restrictions as Active Labor Market Policy: Evidence from the Mexican Bracero Exclusion, Replication files and raw data” (Michael Clemens)

- Hosted on Harvard Dataverse at https://dataverse.harvard.edu/dataverse/bracero

Raw data

Clemens, Michael, 2017, “Raw scanned PDFs of primary sources for workers, wages, and crops”, https://doi.org/10.7910/DVN/DJHVHB, Harvard Dataverse, V1

Your analysis data

Probably requires

- substantial computing resources (time, cost, space)

- lesser storage resources

Good example

Clemens, Michael, 2018, “Replication Data for: Immigration Restrictions as Active Labor Market Policy: Evidence from the Mexican Bracero Exclusion”, https://doi.org/10.7910/DVN/17M4ZP, Harvard Dataverse, V1

LLM-specific considerations

Generically,

pre-trained LLM ▶️ tuned LLM ▶️ analysis data

LLM-specific considerations

\(\text{tuned LLM} = f(\text{training data } D^t, \text{pre-trained LLM})\)

\(\text{analysis data } \widetilde{D} = f(\text{tuned LLM}, \text{raw data } D^*)\)

Both should be preserved

LLM-specific considerations

- size?

- where?

LLM-specific considerations

tuned LLM:

- can you release it? (privacy)

- does Hugging Face have a preservation policy? (no)

- license to apply to it?

LLM-specific considerations

analysis data \(\widetilde{D}\):

- can be preserved as part of the replication package

- Clemens example

LLM-specific considerations

analysis data \(\widetilde{D}\):

- could be preserved separately, if multi-purpose

- example:

Census Linking Project

- example:

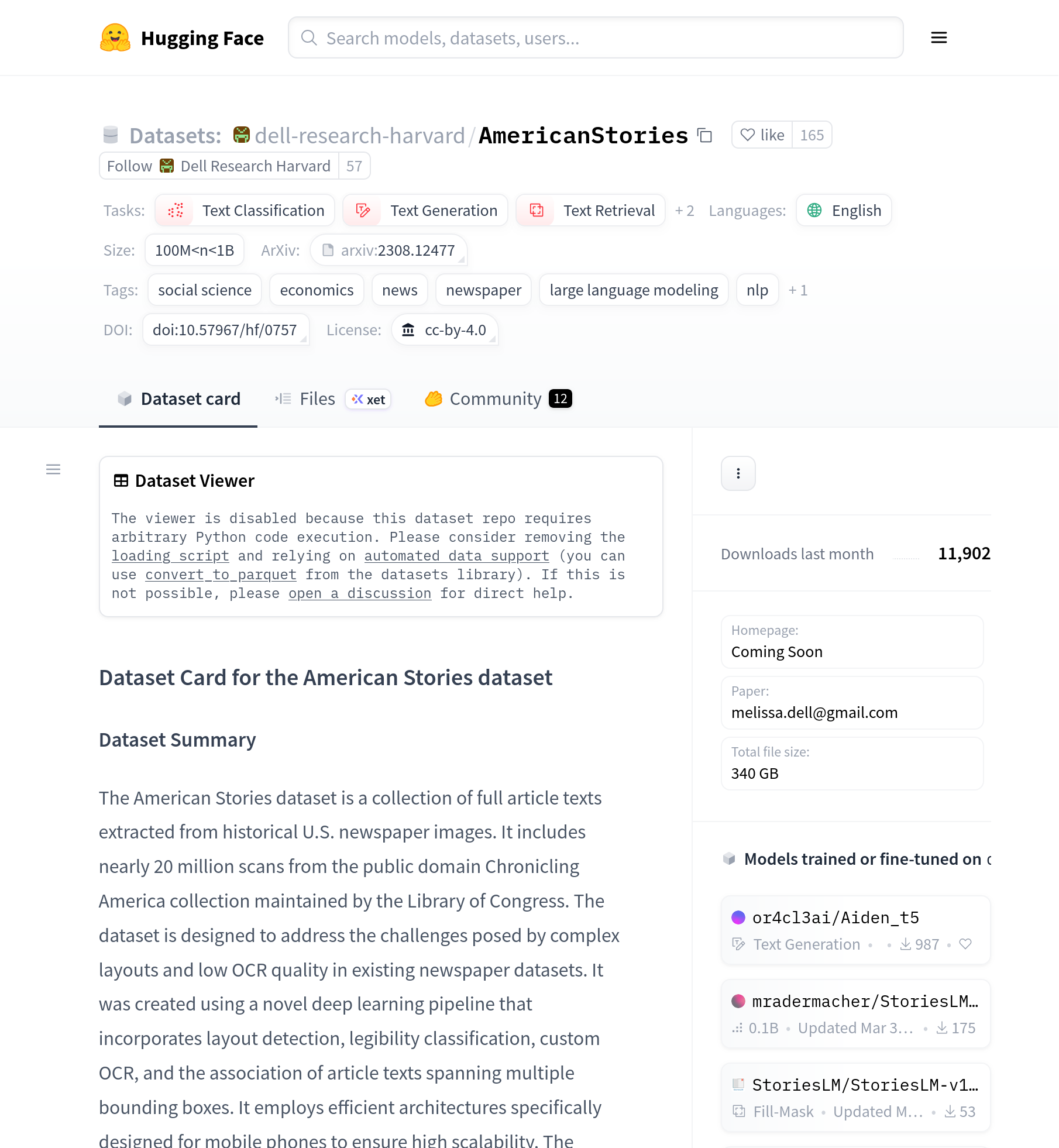

LLM-specific considerations

analysis data \(\widetilde{D}\):

- could be preserved separately, if multi-purpose

- example: Dell’s “American Stories” LLM (Dell 2023)

Computing Requirements

Guidance in README

Computational Environment

Describe what YOU used

- relevant hardware, own and rented (CPU/GPU, memory, storage)

- necessary software, including library versions (Python, R, whatever)

Computational Environment

BUT:

- do not list what your computer has installed - list what you used for this project

- (`pip freeze` without an environment will be too much!)

Computational Environment

Describe what the REPLICATOR needs to have or install

- may require that some library is left “loose”

- should be as abstract as necessary (is Linux necessary, convenient, or irrelevant?)

None of this is AI specific!

None of this is AI-specific, but the difficulties may be magnified when using LLMs in research.

LLM-specific considerations

- But LLMs may have some stricter-than-usual operating conditions

- Most economists don’t need dual Nvidia H100 for their computing…

Be as specific as necessary, but no more!

Commercial Software

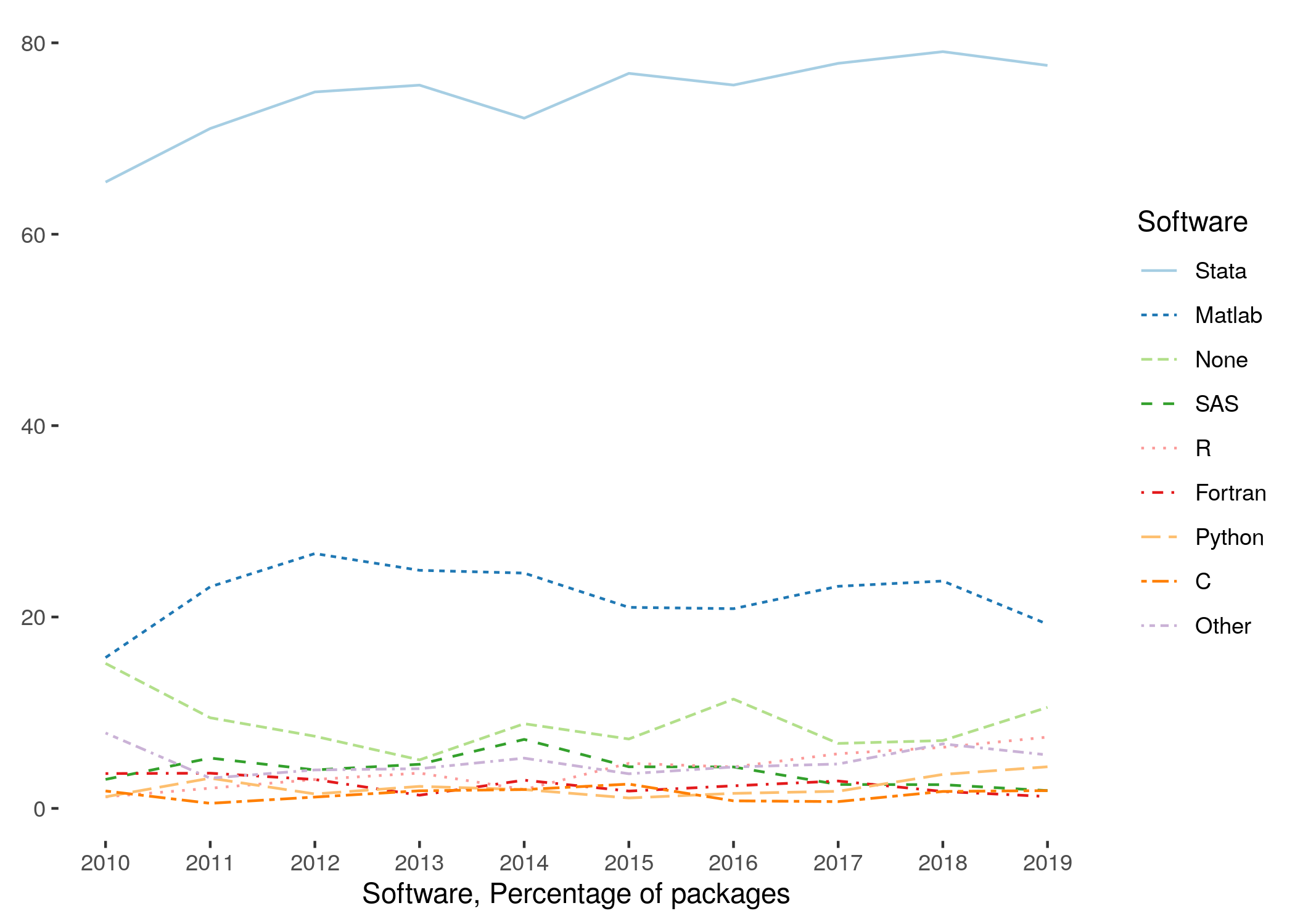

Software used by economists

Software with access limitations

- Most economists use commercial software

- Most use the same software, so access is not as constraining as it might seem at first blush

- Commercial software can go away (or licenses expire) (but…)

Software without access limitations

- In principle, much open-source software is more robustly archived

- all older R versions and packages on CRAN!

- But other ecosystems have points of failure (PyPI, npm, etc.)

LLM-specific considerations

Reminder

pre-trained LLM ▶️ tuned LLM ▶️ analysis data

Where do the pre-trained LLMs roost?

“Community”?

In August 2023, the company announced that it raised $235 million in a Series D funding, at a $4.5 billion valuation. The funding was led by Salesforce, and notable participation came from Google, Amazon, Nvidia, AMD, Intel, IBM, and Qualcomm.

But…

- Hugging Face does assign DOIs to some models

- Presumption of some preservation (no guarantees)

AI-specific considerations

- OpenAI released GPT-2 in 2019 as a non-profit. See (Openai-Community/Gpt2 · Hugging Face, n.d.).

- But: GPT-3 was not released after OpenAI transitioned to become commercial.

- Specific versions of models may not be available after some time, at the discretion of the provider.

This is less of an issue for open-source LLMs (maybe)

- Example: Llama-3

- But: no DOI. Could be withdrawn?

LLM-specific considerations

- Document what software you are running (API, model name, version)

- Document what hardware you are running on

  - local: CPU/GPU, memory, storage

  - rented: cloud provider, instance type, etc.

- API is just a rented hardware environment

- Cost…
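A minimal sketch of how the software and hardware details could be captured automatically, so that they can be pasted into the README or stored next to the outputs. The function name `describe_environment` and the model fields are illustrative assumptions, not part of any package discussed in this talk; GPU and memory details are not covered by the standard library and would need to be added by hand.

```python
import json
import os
import platform
import sys
from importlib import metadata


def describe_environment(packages, model_info):
    """Collect software and hardware details for the README or a log."""
    versions = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None  # not installed in this environment
    return {
        "python": platform.python_version(),
        "os": platform.platform(),
        "machine": platform.machine(),
        "cpu_count": os.cpu_count(),
        "packages": versions,
        "model": model_info,  # API, model name, version: supplied by you
    }


# Hypothetical model details: replace with what you actually called.
info = describe_environment(
    packages=["openai", "pandas", "numpy"],
    model_info={"api": "example-provider", "name": "example-model", "version": "example-version"},
)
json.dump(info, sys.stdout, indent=2)
```

Listing only the packages used by the project, rather than everything installed, keeps the record in line with the advice on computational environments earlier in the talk.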

Run it all again

The very first test is that your code must run, beginning to end, top to bottom, without error, and ideally without any user intervention. This should in principle (re)create all figures, tables, and numbers you include in your paper.

TL;DR

This is pretty much the most basic test of reproducibility.

This has nothing to do with LLM/AI!

If you cannot run your code, you cannot reproduce your results, nor can anybody else. So just re-run the code.

Exceptions

Code runs for a very long time

What happens when some of these re-runs are very long? See later in this chapter for how to handle this.

Making the code run takes YOU a very long time

While the code, once set to run, can do so on its own, you might need to spend a lot of time getting all the various pieces to run.

This should be a warning sign:

If it takes you a long time to get it to run, or to manually reproduce the results, it might take others even longer.

Furthermore, it may suggest that you haven’t been able to re-run your own code very often, which can indicate fragility or even lack of reproducibility.

Takeaways

LLM specificity of runtime variability

Inherent variability

- LLMs are probabilistic by design, so some variability is expected

- “Temperature” is meant to control this, but does so imperfectly
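To make the role of temperature concrete, here is a toy sketch of temperature-scaled sampling over three candidate tokens. It is an illustration of the general idea only, with made-up scores, and not how any particular provider implements it.

```python
import math
import random


def softmax_with_temperature(logits, temperature):
    """Turn raw scores into probabilities; lower temperature sharpens them."""
    scaled = [x / temperature for x in logits]
    top = max(scaled)  # subtract the max for numerical stability
    weights = [math.exp(x - top) for x in scaled]
    total = sum(weights)
    return [w / total for w in weights]


def sample_token(logits, temperature, rng):
    """Draw one index according to the temperature-scaled probabilities."""
    probs = softmax_with_temperature(logits, temperature)
    return rng.choices(range(len(logits)), weights=probs, k=1)[0]


logits = [2.0, 1.0, 0.5]  # made-up scores for three candidate tokens
rng = random.Random(12345)
cold = {sample_token(logits, 0.01, rng) for _ in range(200)}  # near-greedy
warm = {sample_token(logits, 2.0, rng) for _ in range(200)}   # more varied
```

In this toy setting a very low temperature always picks the highest-scoring token, while a higher one spreads the draws across tokens. Real systems add further sources of variation, which is why the control is imperfect.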

Is your result robust?

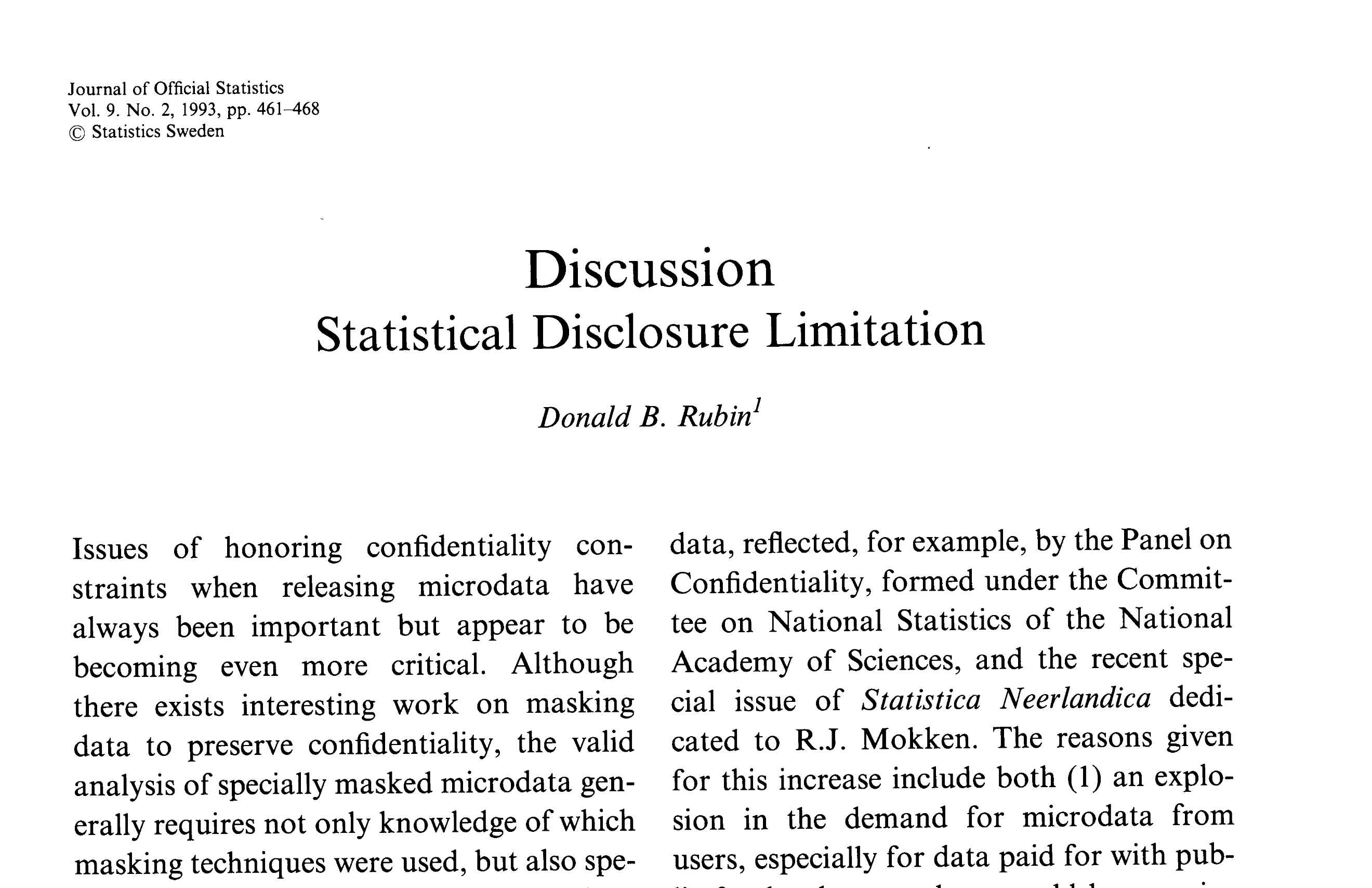

LLM output as a Multiple Imputation problem

- (Rubin 1993) is credited with one of the first formalizations of multiple imputation

- Often used for privacy protection, but also missing data

- See Reiter (2005) for inference rules

LLM output as a Multiple Imputation problem

Recommendation

- Run the LLM (query) multiple times (e.g., 10 times) -> \(D^*_m, m=1,...,10\)

- Run the downstream analysis for each \(D^*_m\) -> \({q}_m, v_m, m=1,...,10\)

- Use multiple imputation rules (Rubin, Reiter, etc.) to report

  - sampling variability inherent in the underlying data \(D^*\): \(\bar{v}\)

  - variability due to the variability in the LLM output: \(b\)
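The recommendation can be sketched with Rubin's classic combining rules for missing-data imputation. This is one possible choice, assumed here for illustration: the rules for synthetic data (Reiter) use a different total-variance formula, and which variant fits LLM output is a judgment the researcher has to make. The numbers are invented.

```python
from statistics import mean, variance


def combine_runs(estimates, variances):
    """Combine m point estimates q_m and their variances v_m across LLM runs."""
    m = len(estimates)
    q_bar = mean(estimates)          # combined point estimate
    v_bar = mean(variances)          # average within-run (sampling) variance
    b = variance(estimates)          # between-run variance (divides by m - 1)
    total = v_bar + (1 + 1 / m) * b  # Rubin's total variance (missing-data case)
    return {"q_bar": q_bar, "v_bar": v_bar, "b": b, "total_variance": total}


# Made-up numbers standing in for ten runs of the downstream analysis.
q = [0.52, 0.49, 0.55, 0.50, 0.51, 0.48, 0.53, 0.50, 0.52, 0.50]
v = [0.010] * 10
result = combine_runs(q, v)
```

Reporting `v_bar` and `b` separately lets readers see how much of the uncertainty comes from the data and how much from the LLM.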

LLM-specific considerations

- Be precise about all the parameters

- Also store outputs from API (but: privacy concerns are real!)

- Document the variability in the output (e.g., by running multiple times)
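One way to store API outputs together with the parameters that produced them is an append-only JSON Lines file. The sketch below assumes you already have the response text in hand; `record_call` and the field names are hypothetical, and the stored prompts and responses are subject to the same privacy concerns as the data themselves.

```python
import hashlib
import json
from datetime import datetime, timezone


def record_call(log_path, prompt, params, response_text):
    """Append one query, its parameters, and the raw response as a JSON line."""
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
        "prompt": prompt,
        "params": params,  # e.g. model name, temperature, seed
        "response": response_text,
    }
    with open(log_path, "a", encoding="utf-8") as f:
        f.write(json.dumps(entry, ensure_ascii=False) + "\n")
    return entry
```

Calling it once per query, including repeated runs of the same prompt, gives a record from which the variability in the output can later be documented.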

Why is this not enough?

Does your code run without manual intervention?

Automation and robustness checks, as well as efficiency.

Can you provide evidence that you ran it?

Generating a log file means that you can inspect it, and you can share it with others. Also helps in debugging, for you and others.

Will it run on somebody else’s computer?

Running it again does not help:

- because it does not guarantee that somebody else has all the software (including packages!)

- because it does not guarantee that all the directories for input or output are there

- because many intermediate files might be present that are not in the replication package

- because you might have run things out of sequence, or relied on previously generated files in ways that won’t work for others

- because some outputs might be present from test runs, but actually fail in this run

Hands-off running: Creating a controller script

Your code must run, beginning to end, top to bottom, without error, and without any user intervention.

This should in principle (re)create all figures, tables, and in-text numbers you include in your paper.

Seem trivial?

Out of 8280 replication packages in ~20 top econ journals, only 2594 (31.33%) had a main/controller script.

TL;DR

- Create a “main” file that runs all the other files in the correct order.

- Run this file, without user intervention.

- It should run without error.

Creating a main or master script

To enable “hands-off running”, the main (controller) script is key.

Example 1: Querying Claude.ai

- For the first example, I was lazy: I typed the prompt into the Claude.ai website.

- Can you repeat it?

- What if I have to repeat it 100 times, with slight variations?

R

Set the root directory (using here() or the rprojroot package).

Call each of the component programs, using source().

```r
# main.R

## Set the root directory
# If you are using Rproj files or git
rootdir <- here::here()
# or if not
# rootdir <- getwd()

## Run the data preparation file
source(file.path(rootdir, "01_data_prep.R"), echo = TRUE)

## Run the analysis file
source(file.path(rootdir, "02_analysis.R"), echo = TRUE)

## Run the table file
source(file.path(rootdir, "03_tables.R"), echo = TRUE)

## Run the figure file
source(file.path(rootdir, "04_figures.R"), echo = TRUE)

## Run the appendix file
source(file.path(rootdir, "05_appendix.R"), echo = TRUE)
```

Notes for R

The use of echo=TRUE is best, as it will show the code that is being run, and is thus more transparent to you and the future replicator.

Notes for Python

- There are many ways to do this in Python (as there are in R)

- Defining functions separately, and then calling them in the main file.

- Constructing a package and calling that package.

Notes for Python

If using procedural Python code, you might use a bash script as the controller.
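The same hands-off idea can also be sketched as a Python controller, which avoids depending on a particular shell. The script names below are hypothetical and simply mirror the R example; `run_all` is an illustrative helper, not an established tool.

```python
import subprocess
import sys
from pathlib import Path

# Hypothetical script names, mirroring the R example; adjust to your project.
SCRIPTS = ["01_data_prep.py", "02_analysis.py", "03_tables.py", "04_figures.py"]


def run_all(rootdir, scripts, log_name="main.log"):
    """Run each script in order with the current interpreter; stop on first error."""
    rootdir = Path(rootdir)
    with open(rootdir / log_name, "w", encoding="utf-8") as log:
        for script in scripts:
            log.write(f"=== Running {script} ===\n")
            log.flush()  # keep the header ahead of the script's own output
            subprocess.run(
                [sys.executable, str(rootdir / script)],
                cwd=rootdir,
                stdout=log,
                stderr=subprocess.STDOUT,
                check=True,  # raise if a script exits with an error
            )


# In main.py, one would call:
# run_all(Path(__file__).resolve().parent, SCRIPTS)
```

Because `check=True` stops at the first failure, a clean run of the controller is itself evidence that the whole sequence works from top to bottom, and the log file records it.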

Caution

What you do should remain transparent to other users!

Caution

Writing a scientific paper is different than writing a useful function on the internet.

You are not writing `mynumpy`, you are writing a paper.

… though there are grey areas there.

Takeaways

An example

Korinek (2023)

Existing Replication Package

- Article (Korinek 2023b) (also NBER WP (Korinek 2024))

- Package at (Korinek 2023a)

- Additional materials at https://www.genaiforecon.org/index.html

- I vetted this package!

Created in 2023

- Python based

- README states

Ensure you have the necessary Python libraries installed:

pip install openai pandas numpy

To execute the simplest example, run the script:

python simple_example_chat1.py

The results will be displayed on the screen.

Ex-post critique

- Missing a `requirements.txt`

- No instructions to set an environment

These are now systematically requested for replication packages!

Trying it out

I created a requirements.txt file

(note: created using pipreqs Python package, plus hand-edit).

Trying it out (2)

Create environment

(note: running on Linux, openSUSE, Python 3.11.10)

Trying it out (3)

Get the API key

- Go to https://platform.OpenAI.com

- Go to `API keys` on the left side

- Verify phone number (a challenge while roaming!)

- `+ Create new secret key`

- Save the API key in file `.env`

Trying it out (4)

Run the script

Failure!

Attempt to fix

- README speaks of environment variable

- Run again, same error!

Reasons

- Not scripted enough, requires manual intervention!

- Ignores `.env` file (one way of doing it)

- Ignores environment variable (another way), despite doing it in another script!

Quick fix

- I fixed the script to read from environment variable.

NEVER RECORD YOUR API KEY IN SCRIPTS!
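A minimal sketch of reading the key at run time instead of writing it into the script. It is not the fix applied to the package; it assumes the variable is named `OPENAI_API_KEY` and that an optional `.env` file holds simple `NAME=value` lines. The helper `load_api_key` is hypothetical.

```python
import os
from pathlib import Path


def load_api_key(var="OPENAI_API_KEY", env_file=".env"):
    """Return the key from the environment, falling back to a .env file."""
    key = os.environ.get(var)
    if key:
        return key
    path = Path(env_file)
    if path.is_file():
        for line in path.read_text(encoding="utf-8").splitlines():
            line = line.strip()
            if not line or line.startswith("#") or "=" not in line:
                continue  # skip blanks, comments, and malformed lines
            name, value = line.split("=", 1)
            if name.strip() == var:
                return value.strip().strip('"').strip("'")
    raise RuntimeError(f"{var} not set; export it or add it to {env_file}")
```

The `.env` file itself should be excluded from the replication package and from version control, so that the key never travels with the code.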

IMPORTANT

- These are standard Python issues, not AI issues!

- But they are crucial for reproducibility!

Result

```
Traceback (most recent call last):
  File "/path/korinek-2023/simple_example_chat1.py", line 37, in <module>
    completion = openai.ChatCompletion.create(
                 ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^
  File "/path/korinek-2023/venv-311/lib64/python3.11/site-packages/openai/lib/_old_api.py", line 39, in __call__
    raise APIRemovedInV1(symbol=self._symbol)
openai.lib._old_api.APIRemovedInV1:

You tried to access openai.ChatCompletion, but this is no longer supported in openai>=1.0.0 - see the README at https://github.com/openai/openai-python for the API.

You can run `openai migrate` to automatically upgrade your codebase to use the 1.0.0 interface.

Alternatively, you can pin your installation to the old version, e.g. `pip install openai==0.28`

A detailed migration guide is available here: https://github.com/openai/openai-python/discussions/742
```

THIS IS A STANDARD PYTHON - API ISSUE!

- These are standard API issues, not AI issues!

- APIs change

- Libraries change

- Having latest is not always best.

- But they are crucial for reproducibility!

Provide `requirements.txt` and pin versions!

(We will talk later about API issues!)

Next attempt

- Fix

requirements.txt, re-install

> python simple_example_chat1.py

Traceback (most recent call last):

File "/path/korinek-2023/simple_example_chat1.py", line 37, in <module>

completion = openai.ChatCompletion.create(

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

<snip>

File "/path/korinek-2023/venv-311/lib64/python3.11/site-packages/openai/api_requestor.py", line 765, in _interpret_response_line

raise self.handle_error_response(

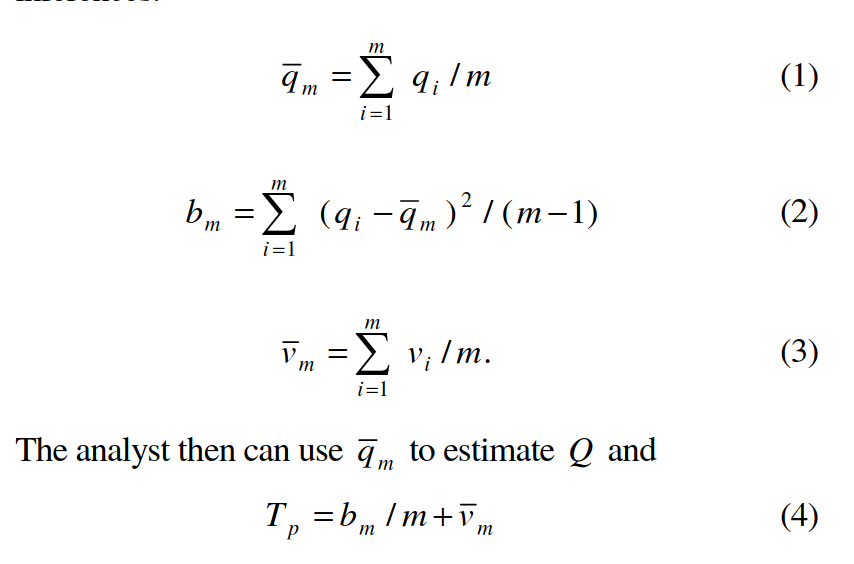

openai.error.InvalidRequestError: The model `gpt-4-0613` does not exist or you do not have access to it.Fixing this

CoPilot response

(note: github.copilot-chat 0.23.1, updated 2024-12-14, 15:31:36)

Turns out…

- I thought I had credits, I did not.

- It was the `you do not have access to it` part that was crucial!

Results

Original content

Content as of 2024-12-14:

1. Job displacement due to automation.

2. Wealth concentration in tech industries.

3. Increased surveillance disproportionately

4. Unequal access to AI technology.

5. AI-driven discrimination in hiring.

6. AI bias in credit scoring.

7. Inequality in AI education and training.

8. AI in healthcare favoring wealthier patien

9. AI-driven gentrification in cities.

10. AI in law enforcement targeting minoritie

11. AI in marketing exploiting vulnerable con

12. AI in politics manipulating voters.

13. AI in insurance favoring privileged group

14. AI in social media amplifying hate speech

15. AI in education favoring affluent student

16. AI in agriculture favoring large-scale fa

17. AI in transportation favoring urban areas

18. AI in retail favoring wealthier consumers

19. AI in entertainment creating cultural div

20. AI in research favoring developed countri

Next…

One of the most frequently asked questions…

“but I have confidential data…”

Equivalently

“but I have a big LLM model…”

So what happens when…

You cannot share a file

The file no longer exists on the internet

The code takes ages to run

How can you show that you actually ran the code?

Creating log files

In order to document that you have actually run your code, a log file, a transcript, or some other evidence, may be useful. It may even be required by certain journals.

TL;DR

- Log files are a way to document that you have run your code.

- In particular for code that runs for a very long time, or that uses data that cannot be shared, log files may be the only way to document basic reproducibility.

Overview

- Most statistical software has ways to keep a record that it has run, with the details of that run.

- Some make it easier than others.

- You may need to instruct your code to be “verbose”, or to “log” certain events.

- You may need to use a command-line option to the software to create a log file.

In almost all cases, the generated log files are simple text files, without any formatting, and can be read by any text editor (e.g., Visual Studio Code, Notepad++, etc.).

If not, ensure that they are (avoid Stata SMCL files, for example, or IPython output).

Creating log files explicitly

Generically: see separate tutorial.

Python

Create a wrapper that will capture the calls for any function, then activate the wrapper with the `@track_calls` decorator.

```python
from datetime import datetime
from functools import wraps


def track_calls(func):
    """Decorator: append a timestamped record of each call to function_log.txt."""
    @wraps(func)  # keep the wrapped function's name and docstring
    def wrapper(*args, **kwargs):
        with open('function_log.txt', 'a') as f:
            timestamp = datetime.now().strftime('%Y-%m-%d %H:%M:%S')
            f.write(f"[{timestamp}] Calling {func.__name__} with args: {args}, kwargs: {kwargs}\n")
        result = func(*args, **kwargs)
        return result
    return wrapper


# Usage: the decorator activates the wrapper for this function
@track_calls
def my_function(x, y, default="TRUE"):
    return x + y


my_function(1, 2, default="false")
```
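Python's standard `logging` module offers another explicit route. The sketch below is one possible setup, with a hypothetical helper `start_log` and logger name; it writes plain-text, timestamped lines to a file while still showing them on the console.

```python
import logging


def start_log(log_path):
    """Send timestamped messages to a plain-text log file and to the console."""
    logging.basicConfig(
        level=logging.INFO,
        format="%(asctime)s %(levelname)s %(message)s",
        handlers=[logging.FileHandler(log_path, mode="w"), logging.StreamHandler()],
        force=True,  # replace any handlers configured earlier (Python 3.8+)
    )
    return logging.getLogger("replication")


# Usage
# log = start_log("main.log")
# log.info("Starting data preparation")
```

Calling `log.info(...)` at the start and end of each step gives a compact record of what ran and when, which can be shared even when the data cannot.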

Creating log files automatically

An alternative (or complement) to creating log files explicitly is to use native functionality of the software to create them. This usually is triggered when using the command line to run the software, and thus may be considered an advanced topic. The examples below are for Linux/macOS, but similar functionality exists for Windows.

Python

In order to capture screen output in Python on Unix-like systems (Linux, macOS), the script's output can be piped through the tee command, which will create a log file with everything that would normally appear on the console.
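Where a shell is not available, a similar effect can be approximated inside Python. This is a sketch under the assumption that all relevant output goes through `print` or `sys.stdout`; output written by subprocesses or compiled extensions would not be captured. `Tee` and `run_with_log` are illustrative names.

```python
import sys
from contextlib import redirect_stdout


class Tee:
    """File-like object that writes to several streams at once."""

    def __init__(self, *streams):
        self.streams = streams

    def write(self, text):
        for stream in self.streams:
            stream.write(text)
        return len(text)

    def flush(self):
        for stream in self.streams:
            stream.flush()


def run_with_log(func, log_path):
    """Call func() while copying everything it prints into log_path."""
    with open(log_path, "w", encoding="utf-8") as log:
        with redirect_stdout(Tee(sys.stdout, log)):
            return func()
```

Wrapping the entry point of the main script in `run_with_log` produces a log on every run without any action from the user.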

Takeaways

Environments

TL;DR

- Search paths and environments are key concepts to create portable, reproducible code, by isolating each project from others.

- Methods exist in all (statistical) programming languages

- For more details, see other guidance

What software supports environments?

- R: `renv` package

- Python: `venv` or `virtualenv` module

- Julia: `Pkg` module

Understanding search paths

Generically, all “environments” simply modify where the specific software searches (the “search path”) for its components, and in particular any supplementary components (packages, libraries, etc.).
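In Python, the search path can be inspected directly. The short sketch below uses only the standard `sys` module; the helper names are illustrative.

```python
import sys


def in_virtual_environment():
    """True when Python runs inside a venv (prefix differs from the base install)."""
    return sys.prefix != sys.base_prefix


def describe_search_path():
    """Return the interpreter location and the directories searched on import."""
    return {
        "executable": sys.executable,
        "prefix": sys.prefix,
        "in_venv": in_virtual_environment(),
        "search_path": list(sys.path),
    }
```

Running this inside and outside an activated environment shows that activation mainly changes which directories appear in `sys.path`, and therefore which package versions get imported.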

Reproducing and documenting environments in Python

Python allows for pinning exact versions of packages from the PyPI repository. This is done by creating a requirements.txt file that lists all the packages that are needed to run your code. In principle, this file can be used by others to recreate the environment you used. The problem is that it might contain TOO many packages, some of which are not relevant, even if you carefully constructed the environment, because it will contain dependencies that are specific to your platform (OS or version of Python).

The issue

Running `pip freeze` will output all the packages installed in your environment. These will include the packages you explicitly installed, but also the packages that were installed as dependencies. Some of those dependencies may be specific to your operating system or environment. In some cases, they contain packages that you needed to develop the code, but that are not needed to run it.

Running `pip freeze > requirements.txt` will output all the packages installed in your environment to a file called requirements.txt. This file can be used to recreate the environment. Obviously, because of the above issue, it will likely contain too many packages.

Running `pip install -r requirements.txt` will install all the packages listed in requirements.txt. If you run this on your computer, in a different environment, this will duplicate your environment, which is fine. But it probably will not work on somebody else’s Mac or Linux system, and may not even work on somebody else’s Windows computer.

The solution

The solution is to create a minimal environment, and document it. This is done in two steps:

- Identify the packages that are needed to run your code. There are packages that may help you with this, but in principle, you want to include everything you explicitly `import` in your code, and nothing else. This is the minimal environment.

- Prune the `requirements.txt` file to only include the packages that are needed to run your code. This will be the file you provide to replicators to recreate your necessary environment, and let the package installers solve all the other dependencies.

The resulting requirements.txt file will contain “pinned” versions of the packages you have, so it will be very precise. Possibly overly precise.

Metadata: Cost

How much does it cost…

- to purchase a Stata/MP-32 license?

- to obtain access for three years to Compustat for China?

- to travel to Norway for in-person access to linked employer-employee data?

- to run a computation with 20,000 core-hours, using `x2iedn.32xlarge` (128 CPUs with 4TB of memory)?

How much does it cost…

- Stata/MP-32: $3,295 (2 users)

- Compustat for China? $500,000

- 3 weeks Norway: $3,000

- 20,000 core-hours/`x2iedn.32xlarge`: $4168.125
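The cloud figure can be reconstructed with simple arithmetic. The hourly rate below is not stated in the talk; it is an assumption inferred from the numbers above (20,000 core-hours on 128-CPU instances), and actual prices vary by region and over time.

```python
def compute_cost(core_hours, cores_per_instance, hourly_rate):
    """Cost of a job that keeps whole instances busy for the required hours."""
    instance_hours = core_hours / cores_per_instance
    return instance_hours * hourly_rate


# 128 CPUs per x2iedn.32xlarge is from the slide; the hourly rate is inferred.
ASSUMED_HOURLY_RATE = 26.676  # USD per instance-hour (assumption)
cost = compute_cost(20_000, 128, ASSUMED_HOURLY_RATE)
print(f"Estimated cost: ${cost:,.2f}")
```

Recording the inputs to such a calculation, rather than only the total, lets a replicator update the estimate when prices change.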

Again…

None of this is AI-specific, but the difficulties may be magnified when using LLMs in research.

How much does it cost…

- To train your model in the cloud?

- To run the model on your data once?

- To run the model multiple times to assess variability?

When it costs A LOT

- provide a subsample of your data that can be cheaper/faster to reproduce

- use that subsample to look at robustness yourself

- how does it change when you use different models,

- how does it change when, just before submitting the package, you run it through again on the same model (or what you think is the same model).

Does it need to cost a lot?

Run it through on the “best” open-source model, and compare the output.

- Is it robust (again)?

- Is it “better”/“worse” (and what metric did you choose)?

Metadata

Metadata

Beyond cost, what else?

- Information on tuning, hyperparameters, etc. - any manual steps!

- Seeds for pRNG and information if they work!

- Variability and what replicators should expect
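Whether a seed "works" can be checked rather than assumed. The sketch below does this for Python's own generator; the same pattern (run twice with the same seed, compare) could be applied to an LLM call that accepts a seed, though whether a given API honours it is an assumption to verify. The names are illustrative.

```python
import random


def draws_with_seed(seed, n=5):
    """Return n pseudo-random draws from a generator initialised with seed."""
    rng = random.Random(seed)
    return [rng.random() for _ in range(n)]


def seed_is_effective(seed):
    """Check that re-using the seed reproduces the same draws."""
    return draws_with_seed(seed) == draws_with_seed(seed)


SEED = 20260501  # arbitrary value; record whatever you actually used
metadata_entry = {"seed": SEED, "seed_reproduces_draws": seed_is_effective(SEED)}
```

Storing both the seed and the outcome of the check tells replicators whether to expect identical or merely similar results.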

Conclusion

AI and LLMs are not special

… when it comes to reproducibility

But difficulties are magnified

… compared to the average difficulty in economics papers

Solutions

- Computational empathy

- Remember your own difficulties in getting this to work

- Now put yourself in others’ computer (shoes)

- Be very clear about what is needed - you are cutting edge, others may not be!

Solutions

- Be precise about versions

- of input data (including RAG/training/fine-tuning)

- of software used (Python, libraries, but also models - do not use “latest”!)

Solutions

- Include all code

- that includes prompts, intermediate responses

- even if data are not included

Solutions

- Include all metadata

- fix random seeds, where possible

- hyperparameters, temperature, or whatever it is called

- prompts could be considered metadata

- Understand and explain when some parameters cannot be controlled.

Solutions

- Include data where possible

- licenses

- size

- intermediate data where useful/time-consuming

but:

- licenses!

- privacy!

Solutions

- Consider how and where to preserve

- industry repositories may be fine for sharing

- academic repositories (Zenodo, Dataverse) handle preservation

- Toolkit is still in its infancy for the preservation of large data (\(\geq\) 200 GB)

Solutions

- Use existing resources

- Template README (presentation) as guidance

- Self-check your code (presentation)

Questions

This presentation

Useful links

- GitHub

- Lars Vilhuber

- Presentation QR Code:

- Last Run on 2026-06-09

Appendix

What did the AI say?

What did Claude say?

A presentation on the reproducibility of AI-based research should address several key areas to ensure a comprehensive understanding of the topic. Here are some important points to consider:

1. **Introduction to Reproducibility**:

- Define reproducibility in the context of AI research.

- Explain why reproducibility is crucial for scientific integrity, validation, and progress.

2. **Challenges in Reproducibility**:

- Discuss common challenges such as lack of access to data, proprietary algorithms, and insufficient documentation.

- Highlight issues related to computational resources and environment dependencies.

3. **Data Accessibility**:

- Emphasize the importance of open datasets and the challenges of data privacy and security.

- Discuss strategies for sharing data, such as anonymization and data licensing.

4. **Algorithm and Model Transparency**:

- Address the need for sharing model architectures, hyperparameters, and training procedures.

- Discuss the role of open-source software and platforms in promoting transparency.

5. **Documentation and Reporting Standards**:

- Highlight the importance of thorough documentation, including code comments, readme files, and research papers.

- Discuss existing standards and guidelines, such as those from journals or conferences, for reporting AI research.

6. **Tools and Platforms for Reproducibility**:

- Introduce tools and platforms that facilitate reproducibility, such as version control systems (e.g., Git), containerization (e.g., Docker), and workflow management systems.

- Discuss the role of cloud computing in providing scalable and reproducible environments.

7. **Case Studies and Examples**:

- Present examples of successful reproducibility efforts in AI research.

- Discuss lessons learned from reproducibility failures and how they were addressed.

8. **Community and Collaboration**:

- Highlight the role of the research community in fostering a culture of reproducibility.

- Discuss collaborative efforts, such as reproducibility challenges and workshops.

9. **Ethical and Legal Considerations**:

- Address ethical concerns related to data sharing and model transparency.

- Discuss legal implications, such as intellectual property rights and compliance with regulations.

10. **Future Directions and Recommendations**:

- Suggest ways to improve reproducibility in AI research, such as developing better standards, incentives, and educational resources.

- Discuss the potential impact of emerging technologies and methodologies on reproducibility.

11. **Conclusion**:

- Summarize the key points discussed.

- Reinforce the importance of reproducibility for advancing AI research and its applications.

By covering these areas, the presentation can provide a well-rounded view of the challenges and solutions related to the reproducibility of AI-based research.

What did OpenAI say?

A presentation on the reproducibility of AI-based research should address several key areas to ensure a comprehensive understanding of the topic. Here are some important points to consider:1. **Introduction to Reproducibility**:

- Define reproducibility in the context of AI research.

- Explain why reproducibility is crucial for scientific integrity, validation, and progress.2. **Challenges in Reproducibility**:

- Discuss common challenges such as lack of access to data, proprietary algorithms, and insufficient documentation.

- Highlight issues related to computational resources and environment dependencies.3. **Data Accessibility**:

- Emphasize the importance of open datasets and the challenges of data privacy and security.

- Discuss strategies for sharing data, such as anonymization and data licensing.4. **Algorithm and Model Transparency**:

- Address the need for sharing model architectures, hyperparameters, and training procedures.

- Discuss the role of open-source software and platforms in promoting transparency.

5. **Documentation and Reporting Standards**:

- Highlight the importance of thorough documentation, including code comments, readme files, and research papers.

- Discuss existing standards and guidelines, such as those from journals or conferences, for reporting AI research.

6. **Tools and Platforms for Reproducibility**:

- Introduce tools and platforms that facilitate reproducibility, such as version control systems (e.g., Git), containerization (e.g., Docker), and workflow management systems.

- Discuss the role of cloud computing in providing scalable and reproducible environments.

7. **Case Studies and Examples**:

- Present examples of successful reproducibility efforts in AI research.

- Discuss lessons learned from reproducibility failures and how they were addressed.

8. **Community and Collaboration**:

- Highlight the role of the research community in fostering a culture of reproducibility.

- Discuss collaborative efforts, such as reproducibility challenges and workshops.

9. **Ethical and Legal Considerations**:

- Address ethical concerns related to data sharing and model transparency.

- Discuss legal implications, such as intellectual property rights and compliance with regulations.

10. **Future Directions and Recommendations**:

- Suggest ways to improve reproducibility in AI research, such as developing better standards, incentives, and educational resources.

- Discuss the potential impact of emerging technologies and methodologies on reproducibility.

11. **Conclusion**:

- Summarize the key points discussed.

- Reinforce the importance of reproducibility for advancing AI research and its applications.

By covering these areas, the presentation can provide a well-rounded view of the challenges and solutions related to the reproducibility of AI-based research.

What did Gemini say?

A presentation on the reproducibility of AI-based research should address a multifaceted issue, covering both the challenges and potential solutions. Here's a breakdown of key areas to consider:

**1. Defining Reproducibility in the Context of AI:**

* **What does reproducibility mean in AI?** Differentiate between:

  * **Replication:** Re-running the same code on the same data and getting the same results.

  * **Reproduction:** Re-implementing the method from scratch based on the paper and getting similar results.

  * **Robustness:** Evaluating the model's performance on different datasets or under different conditions.

* **Why is reproducibility important?** Highlight the benefits:

  * **Scientific validity:** Ensuring the reliability and trustworthiness of research findings.

  * **Progress and innovation:** Building upon existing work and accelerating advancements.

  * **Transparency and accountability:** Allowing for scrutiny and identification of potential biases or errors.

  * **Practical applications:** Facilitating the deployment and adoption of AI models in real-world scenarios.

**2. Challenges to Reproducibility in AI:**

* **Code and Implementation:**

  * **Lack of code availability:** Papers often lack publicly available code or provide incomplete/unusable code.

  * **Poor code quality:** Unstructured, undocumented, or hard-to-understand code.

  * **Dependency issues:** Incompatible library versions, operating systems, or hardware.

  * **Hidden implementation details:** Crucial parameters or preprocessing steps not explicitly mentioned in the paper.

* **Data:**

  * **Data unavailability:** Datasets are often proprietary, sensitive, or difficult to access.

  * **Data preprocessing:** Inconsistent or undocumented data cleaning, transformation, or augmentation techniques.

  * **Data versioning:** Lack of clarity on which version of the dataset was used.

  * **Data bias:** Datasets may contain biases that affect the model's performance and generalizability.

* **Computational Environment:**

  * **Hardware differences:** Variations in CPU, GPU, and memory can impact results.

  * **Software environment:** Differences in operating systems, libraries, and drivers.

  * **Randomness:** The use of random seeds and initialization can lead to variations in results.

* **Methodological Issues:**

  * **Incomplete descriptions:** Lack of detail in the paper about the experimental setup, hyperparameters, or evaluation metrics.

  * **Cherry-picking results:** Reporting only the best results and ignoring less favorable outcomes.

  * **Lack of statistical rigor:** Insufficient statistical analysis or inappropriate use of statistical tests.

* **Human Factors:**

  * **Time constraints:** Researchers may lack the time or resources to properly document their work.

  * **Incentives:** The current academic system may not adequately reward reproducible research.

  * **Lack of awareness:** Researchers may not be fully aware of the importance of reproducibility or best practices.

**3. Solutions and Best Practices for Improving Reproducibility:**

* **Code Sharing and Version Control:**

  * **Public repositories:** Using platforms like GitHub, GitLab, or Bitbucket to share code.

  * **Version control:** Employing Git to track changes and manage different versions of the code.

  * **Clear documentation:** Providing comprehensive README files and comments within the code.

* **Data Management:**

  * **Public datasets:** Utilizing publicly available datasets whenever possible.

  * **Data sharing platforms:** Using platforms like Kaggle or Zenodo to share datasets.

  * **Data provenance:** Documenting the origin, processing, and versioning of the data.

  * **Data anonymization:** Ensuring the privacy and security of sensitive data.

* **Computational Environment Management:**

  * **Containerization:** Using Docker or Singularity to create reproducible environments.

  * **Virtual environments:** Using tools like conda or venv to manage dependencies.

  * **Configuration files:** Storing all necessary parameters and settings in configuration files.

* **Methodological Transparency:**

  * **Detailed descriptions:** Providing comprehensive descriptions of the experimental setup, hyperparameters, and evaluation metrics.

  * **Open access publications:** Publishing research in open access journals or repositories.

  * **Pre-registration:** Registering research plans before conducting experiments.

  * **Statistical rigor:** Employing appropriate statistical methods and reporting confidence intervals.

* **Community Efforts and Tools:**

  * **Reproducibility checklists:** Using checklists to ensure that all necessary steps are taken.

  * **Reproducibility badges:** Awarding badges to papers that meet certain reproducibility criteria.

  * **Reproducibility workshops and tutorials:** Providing training and resources to researchers.

  * **Open-source tools and libraries:** Developing and using tools that facilitate reproducible research.

**4. Call to Action:**

* **Encourage researchers to adopt best practices.**

* **Promote the use of open-source tools and platforms.**

* **Advocate for changes in the academic system to incentivize reproducible research.**

* **Raise awareness about the importance of reproducibility in AI.**

**Presentation Structure Suggestions:**

* **Start with a compelling example** of a reproducibility failure in AI research.

* **Clearly define the problem** and its implications.

* **Present the challenges** in a structured and understandable way.

* **Offer practical solutions** and best practices.

* **Conclude with a call to action** and a positive outlook for the future of reproducible AI research.

**Visual Aids:**

* **Use clear and concise slides.**

* **Include diagrams and illustrations to explain complex concepts.**

* **Show examples of good and bad code documentation.**

* **Use charts and graphs to visualize data and results.**

By addressing these key areas, your presentation will provide a comprehensive overview of the challenges and opportunities surrounding the reproducibility of AI-based research, ultimately contributing to a more robust and trustworthy field. Remember to tailor your presentation to your specific audience and their level of understanding.

References

Footnotes

Wikipedia on 2024-12-15.

Source: Red Warning PNG Clipart, CC-BY.

Results computed on Nov 26, 2023 based on a scan of replication packages conducted by Sebastian Kranz. 2023. “Economic Articles with Data”. https://ejd.econ.mathematik.uni-ulm.de/, searching for the words main, master, makefile, dockerfile, apptainer, singularity in any of the program files in those replication packages. Code not yet integrated into this presentation.

Formally, this is true for operating systems as well, and in some cases, the operating system and the programming language interact (for instance, in Python).

Claude, queried on 2024-12-16, see lars_query_claude.py and lars-prompt1.txt.

OpenAI, queried on 2024-12-14, see lars_query.py and lars-prompt1.txt.

Gemini, queried on 2024-12-15, see lars_query_gemini.py and lars-prompt1.txt.